Title : An Overview of Processing-in-Memory Circuits for Artificial Intelligence and Machine Learning

Authors : Donghyuk Kim, Chengshuo Yu, Shanshan Xie, Yuzong Chen, Joo-Young Kim, Bongjin Kim, Jaydeep Kulkarni, Tony Tae-Hyoung Kim

Publications : IEEE Journal on Emerging and Selected Topics in Circuits and Systems (JETCAS), 2022

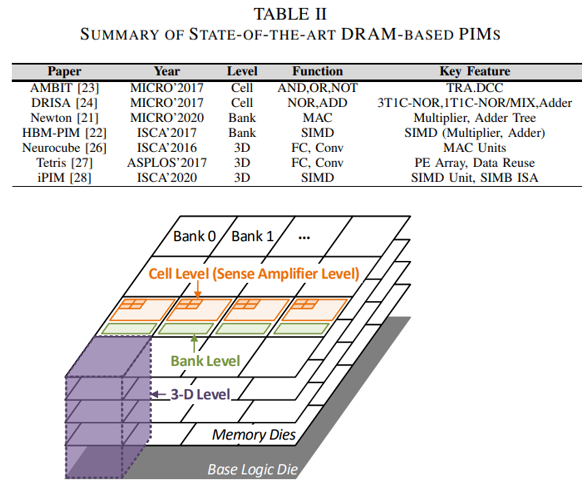

Artificial intelligence (AI) and machine learning (ML) are revolutionizing many fields of study, such as visual recognition, natural language processing, autonomous vehicles, and prediction. Traditional von Neumann computing architectures, with separate processing elements and memory devices, have been improving their computing performance rapidly with the scaling of process technology. However, in the era of AI and ML, data transfer between memory devices and processing elements has become the bottleneck of the system. To address this data movement issue, memory-centric computing merges the memory devices with the processing elements so that computations can be done in the same location without moving any data. Processing-in-memory (PIM) has attracted the research community’s attention because it can substantially improve the energy efficiency of memory-centric computing systems by minimizing data movement. Even though the benefits of PIM are well accepted, its limitations and challenges have not been investigated thoroughly. This paper presents a comprehensive investigation of state-of-the-art PIM research works based on various memory device types, such as static random-access memory (SRAM), dynamic random-access memory (DRAM), and resistive memory (ReRAM). We will present an overview of PIM designs in each memory type, covering bit cells, circuits, and architecture. Then, a new software stack standard and its challenges for incorporating PIM with the conventional computing architecture will be discussed. Finally, we will discuss various future research directions in PIM for further reducing the data conversion overhead, improving