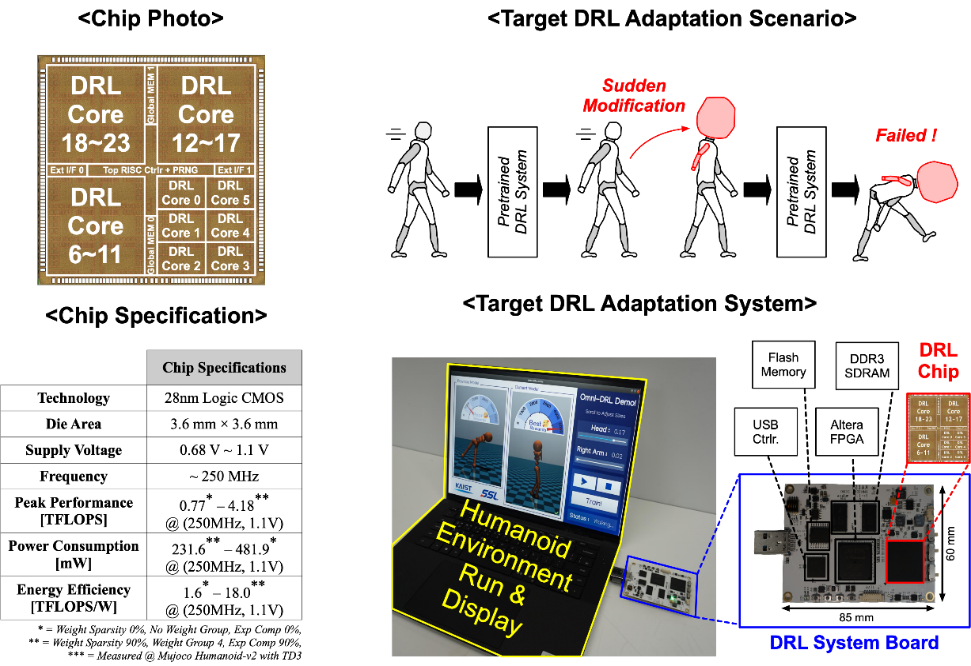

We present OmniDRL, an energy-efficient deep reinforcement learning (DRL) processor for DRL training on edge devices. Demand for on-device DRL training is growing because a DRL agent can adapt to each individual user. However, the massive amount of external and internal memory access that training requires limits its implementation on resource-constrained platforms. OmniDRL introduces four key features that reduce external memory access by compressing as much data as possible and reduce internal memory access by processing the compressed data directly. Group-sparse training (GST) enables a high weight compression ratio in every DRL iteration. A group-sparse training core takes full advantage of the weights compressed by GST. Exponent mean delta encoding further compresses the exponents of both weights and feature maps. A world-first on-chip sparse weight transposer allows DRL training to operate on compressed weights without an off-chip transposer. OmniDRL is fabricated in 28 nm CMOS technology and occupies a 3.6 × 3.6 mm² die area. It achieves 7.42 TFLOPS/W energy efficiency when training a robot agent (MuJoCo HalfCheetah, TD3), which is 2.4× higher than the previous state of the art.
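To give a feel for the idea behind exponent mean delta encoding, here is a minimal software sketch. It is not the on-chip implementation. It assumes float32 inputs, a single mean per block, a 3-bit signed delta, and a one-bit escape flag for exponents that fall outside the delta range. All of these choices, along with the function names, are illustrative assumptions rather than details taken from the chip.

```python
import numpy as np

def exponent_mean_delta_encode(values, delta_bits=3):
    """Return (mean exponent, per-value deltas, mask of deltas that fit).

    Hypothetical helper: bit widths and float32 format are assumptions.
    """
    x = np.asarray(values, dtype=np.float32)
    bits = x.view(np.uint32)
    # Extract the 8-bit biased exponent field of each IEEE 754 float32.
    exponents = ((bits >> 23) & 0xFF).astype(np.int32)
    mean_exp = int(np.rint(exponents.mean()))
    deltas = exponents - mean_exp
    # Range representable by a signed delta of `delta_bits` bits.
    lo, hi = -(1 << (delta_bits - 1)), (1 << (delta_bits - 1)) - 1
    fits = (deltas >= lo) & (deltas <= hi)
    return mean_exp, deltas, fits

def exponent_mean_delta_decode(mean_exp, deltas):
    """Recover the biased exponents from the mean and the deltas."""
    return (mean_exp + np.asarray(deltas)).astype(np.int32)

def encoded_exponent_bits(fits, delta_bits=3, mean_bits=8, raw_bits=8):
    """Bit cost under the assumed layout: one shared mean, one flag bit per
    value, then a short delta if it fits or the raw exponent otherwise."""
    n_fit = int(np.count_nonzero(fits))
    n_escape = fits.size - n_fit
    return mean_bits + fits.size + n_fit * delta_bits + n_escape * raw_bits

block = [0.5, 0.75, 1.0, 1.5, 2.0, 3.0]
mean_exp, deltas, fits = exponent_mean_delta_encode(block)
print(mean_exp, deltas.tolist(), encoded_exponent_bits(fits), 8 * len(block))
```

The sketch relies on values within a layer tending to have similar magnitudes, so their exponents cluster around the mean and most deltas fit in a few bits. The actual savings depend on the data distribution and on the chosen bit widths.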

Related papers:

- Lee et al., “OmniDRL: An Energy-Efficient Deep Reinforcement Learning Processor With Dual-Mode Weight Compression and Sparse Weight Transposer,” in IEEE Journal of Solid-State Circuits, April 2022

- Lee et al., “OmniDRL: An Energy-Efficient Mobile Deep Reinforcement Learning Accelerators with Dual-mode Weight Compression and Direct Processing of Compressed Data,” 2021 IEEE Hot Chips 33 Symposium (HCS), 2021

- Lee et al., “OmniDRL: A 29.3 TFLOPS/W Deep Reinforcement Learning Processor with Dual-mode Weight Compression and On-chip Sparse Weight Transposer,” 2021 Symposium on VLSI Circuits, 2021

- Lee et al., “Low-power Autonomous Adaptation System with Deep Reinforcement Learning,” 2022 AICAS, 2022

- Lee et al., “Energy-Efficient Deep Reinforcement Learning Accelerator Designs for Mobile Autonomous Systems,” 2021 AICAS, 2021