Authors: Dong-Jun Han*, Jungwuk Park*, Seokil Ham, Namjin Lee, Jaekyun Moon (*=equal contribution)

Journal: IEEE Transactions on Neural Networks and Learning Systems, 2023

Abstract:

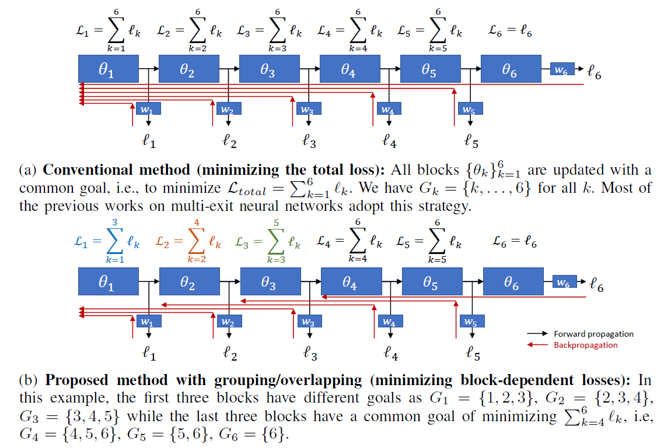

As models grow in size, making predictions with deep neural networks becomes more computationally expensive. A multi-exit neural network is one promising solution that can flexibly make anytime predictions via early exits, depending on the current test-time budget, which may vary over time in practice (e.g., self-driving cars with dynamically changing speeds). However, the prediction performance at the earlier exits is generally much lower than at the final exit, which becomes a critical issue in low-latency applications with a tight test-time budget. In contrast to previous works, where each block is optimized to minimize the losses of all exits simultaneously, we propose a new method for training multi-exit neural networks by strategically imposing different objectives on individual blocks. The proposed idea, based on grouping and overlapping strategies, improves the prediction performance at the earlier exits without degrading the performance of the later ones, making our scheme more suitable for low-latency applications. Extensive experimental results on both image classification and semantic segmentation confirm the advantage of our approach. The proposed idea does not require any modifications to the model architecture and can be easily combined with existing strategies for improving the performance of multi-exit neural networks.
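For readers new to the setting, the following is a minimal sketch of the two background ideas the abstract refers to: anytime prediction under a test-time budget, and the conventional training objective in which the losses of all exits are combined. It is not the proposed grouping and overlapping method, whose details are not given here. The names `anytime_predict`, `joint_exit_loss`, `block_costs`, and `budget` are hypothetical, blocks and exits are modeled as plain Python callables, and the cost of the exit heads themselves is assumed to be negligible.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Vector = List[float]
Layer = Callable[[Vector], Vector]


def anytime_predict(
    x: Vector,
    blocks: Sequence[Layer],
    exits: Sequence[Layer],
    block_costs: Sequence[float],
    budget: float,
) -> Tuple[int, Vector]:
    """Run blocks in order while the cumulative cost fits the budget.

    Returns the index of the deepest exit reached and that exit's output.
    Assumption: exit heads are free; only blocks consume budget.
    """
    if not (len(blocks) == len(exits) == len(block_costs)):
        raise ValueError("blocks, exits and block_costs must have equal length")

    hidden = x
    spent = 0.0
    reached = -1
    for index, (block, cost) in enumerate(zip(blocks, block_costs)):
        if spent + cost > budget:
            break  # the next block would exceed the test-time budget
        hidden = block(hidden)
        spent += cost
        reached = index

    if reached < 0:
        raise ValueError("budget is too small to reach the first exit")
    return reached, exits[reached](hidden)


def joint_exit_loss(
    exit_losses: Sequence[float],
    weights: Optional[Sequence[float]] = None,
) -> float:
    """Conventional baseline objective: a weighted sum of all exit losses.

    Under this objective every block is trained against every later exit,
    which is the setup the abstract contrasts the proposed method with.
    """
    if weights is None:
        weights = [1.0] * len(exit_losses)
    if len(weights) != len(exit_losses):
        raise ValueError("weights and exit_losses must have equal length")
    return sum(w * loss for w, loss in zip(weights, exit_losses))
```

With three blocks of unit cost and a budget of 2.5, `anytime_predict` stops after the second block and returns the prediction of the second exit (index 1); a larger budget lets the input travel deeper before a prediction is made.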