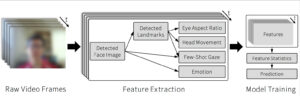

The COVID-19 pandemic has brought the importance of online education to the forefront. Understanding students’ attentional states is crucial for lecturers, but it can be more difficult in online settings than in physical classrooms. Existing methods for gauging online students’ attentional states typically require specialized sensors such as eye trackers and are therefore not easily deployable to every student in real-world settings. To tackle this problem, we use facial video from students’ webcams to predict attentional states in online lectures. We conduct an in-the-wild experiment with 37 participants, resulting in a dataset of 15 hours of facial recordings of students taking lectures, with 1,100 corresponding attentional state probes. We present PAFE (Predicting Attention with Facial Expression), a facial-video-based framework for attentional state prediction that focuses on vision-based representations of traditional physiological mind-wandering features related to partial drowsiness, emotion, and gaze. Our model requires only a single camera and outperforms gaze-only baselines.