[Title]

Learning-driven exploration for reinforcement learning

[Authors]

Muhammad Usama, Dong Eui Chang

[Abstract]

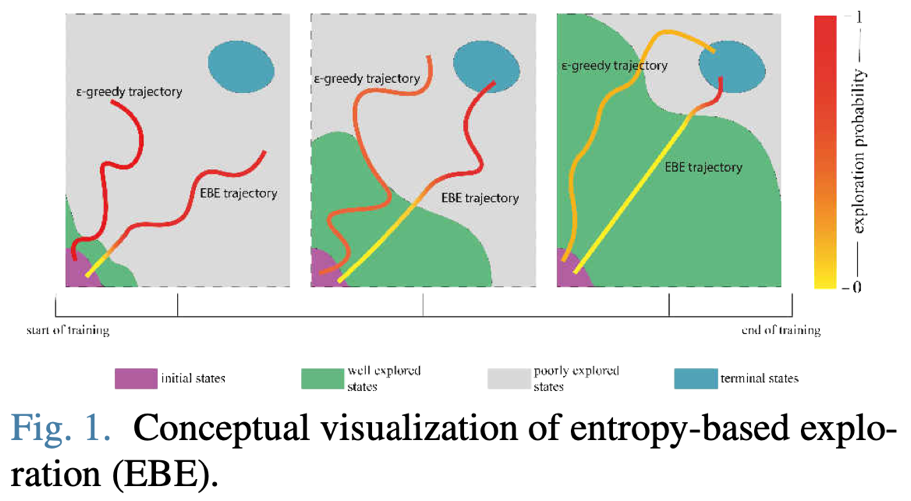

Effective and intelligent exploration remains an unresolved problem in reinforcement learning. Most contemporary reinforcement learning methods rely on simple heuristic strategies that cannot distinguish well-explored from unexplored regions of the state space, which can lead to inefficient use of training time. We introduce entropy-based exploration (EBE), which enables an agent to efficiently explore the unexplored regions of the state space. EBE quantifies the agent’s learning in a state using state-dependent action values and explores the state space adaptively, i.e., it explores more in unexplored regions of the state space. We perform experiments on a diverse set of environments and demonstrate that EBE enables efficient exploration that ultimately results in faster learning, without the need to tune any hyperparameter. The code to reproduce the experiments is available at https://github.com/Usama1002/EBE-Exploration and the supplementary video is available at https://youtu.be/nJggIjjzKic.
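To make the idea of state-dependent, entropy-driven exploration concrete, the following is a minimal sketch, not the authors' implementation. It assumes that the agent's learning in a state is measured by the entropy of a softmax distribution over that state's action values, normalized by the maximum possible entropy so that it lies in [0, 1], and that this value is used as the probability of taking a random action. The abstract states only that EBE uses state-dependent action values; the softmax, the normalization, and the function names `softmax`, `exploration_probability`, and `select_action` are illustrative assumptions.

```python
import math
import random


def softmax(values):
    """Numerically stable softmax over a list of action values."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def exploration_probability(q_values):
    """Assumed measure: entropy of softmax(Q(s, .)) divided by log(num_actions).

    Close to 1 when all action values are similar (little has been learned
    about the state), close to 0 when one action clearly dominates.
    """
    if len(q_values) < 2:
        return 0.0
    probs = softmax(q_values)
    entropy = -sum(p * math.log(p) for p in probs if p > 0.0)
    return entropy / math.log(len(q_values))


def select_action(q_values, rng=random):
    """Take a random action with the state-dependent probability, else act greedily."""
    if rng.random() < exploration_probability(q_values):
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])
```

Under these assumptions, a state whose action values are all equal yields an exploration probability of about 1, whereas a state with one clearly dominant action value yields a probability near 0, so no exploration rate or decay schedule has to be set by hand.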