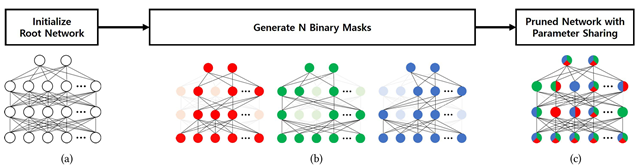

Title: Parameter Sharing with Network Pruning for Scalable Multi-Agent Deep Reinforcement Learning

Venue: AAMAS 2023

Abstract: Handling the problem of scalability is one of the essential issues for multi-agent reinforcement learning (MARL) algorithms to be applied to real-world problems typically involving massively many agents. For this, parameter sharing across multiple agents has widely been used since it reduces the training time by decreasing the number of parameters and increasing the sample efficiency. However, using the same parameters across agents limits the representational capacity of the joint policy and consequently, the performance can be degraded in multi-agent tasks that require different behaviors for different agents. In this paper, we propose a simple method that adopts structured pruning for a deep neural network to increase the representational capacity of the joint policy without introducing additional parameters. We evaluate the proposed method on several benchmark tasks, and numerical results show that the proposed method significantly outperforms other parameter-sharing methods.
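As a rough illustration of the idea in this abstract, the sketch below gives every agent the same weights but a different fixed binary mask over the hidden units, so agents can behave differently without extra parameters. The random choice of masks, the two-layer network, and the names `make_agent_masks` and `shared_policy_logits` are assumptions made for this example; the abstract does not specify how the paper builds its masks.

```python
import numpy as np

def make_agent_masks(n_agents, hidden_dim, keep_ratio, seed=0):
    """Draw one fixed binary mask over hidden units per agent.

    Masking whole units (not single weights) is what makes the pruning
    'structured'. Random selection is an assumption of this sketch.
    """
    rng = np.random.default_rng(seed)
    n_keep = max(1, int(round(keep_ratio * hidden_dim)))
    masks = np.zeros((n_agents, hidden_dim))
    for i in range(n_agents):
        kept = rng.choice(hidden_dim, size=n_keep, replace=False)
        masks[i, kept] = 1.0
    return masks

def shared_policy_logits(obs, agent_id, params, masks):
    """Forward pass through the shared weights with this agent's units pruned."""
    w1, b1, w2, b2 = params
    hidden = np.maximum(0.0, obs @ w1 + b1)  # ReLU on the shared hidden layer
    hidden = hidden * masks[agent_id]        # zero out the units pruned for this agent
    return hidden @ w2 + b2

# Toy setup: 3 agents share one parameter set.
rng = np.random.default_rng(1)
obs_dim, hidden_dim, n_actions, n_agents = 4, 16, 3, 3
params = (
    rng.normal(size=(obs_dim, hidden_dim)),
    np.zeros(hidden_dim),
    rng.normal(size=(hidden_dim, n_actions)),
    np.zeros(n_actions),
)
masks = make_agent_masks(n_agents, hidden_dim, keep_ratio=0.5)
obs = rng.normal(size=obs_dim)
logits = [shared_policy_logits(obs, i, params, masks) for i in range(n_agents)]
```

The parameter count is that of a single network regardless of the number of agents; only the masks differ per agent.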

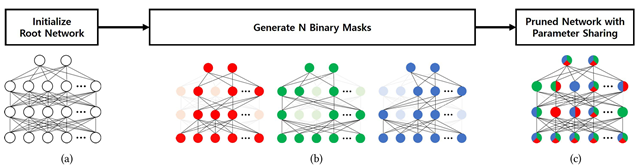

Main Figure:

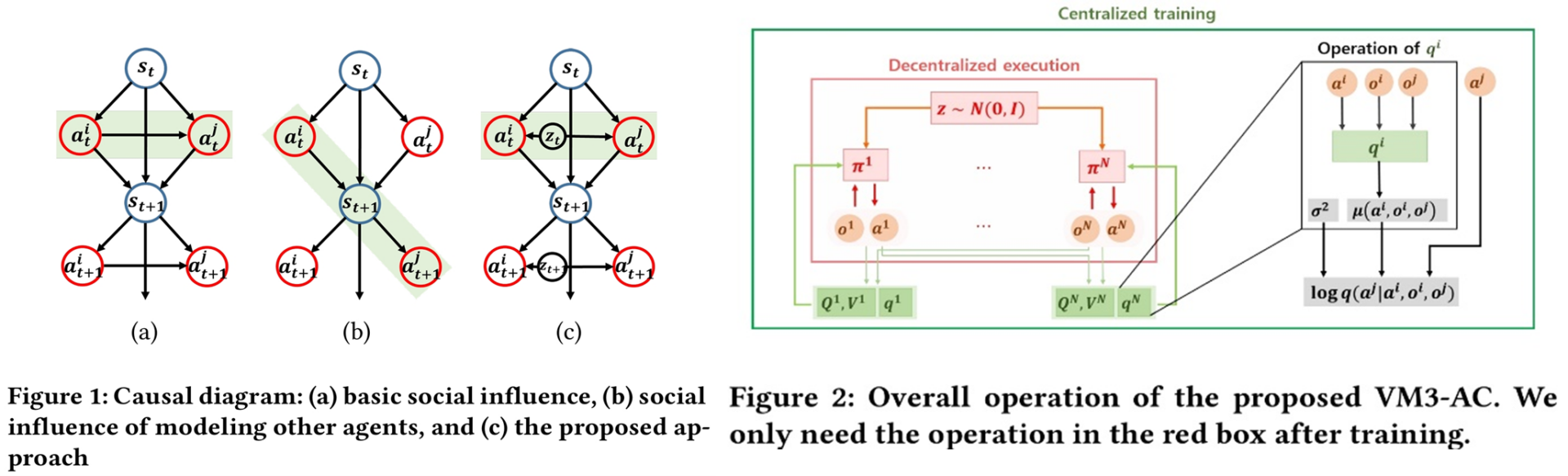

Title: A Variational Approach to Mutual Information-Based Coordination for Multi-Agent Reinforcement Learning

Venue: AAMAS 2023

Abstract: In this paper, we propose a new mutual information framework for multi-agent reinforcement learning to enable multiple agents to learn coordinated behaviors by regularizing the accumulated return with the simultaneous mutual information between multi-agent actions. By introducing a latent variable to induce nonzero mutual information between multi-agent actions and applying a variational bound, we derive a tractable lower bound on the considered MMI-regularized objective function. The derived tractable objective can be interpreted as maximum entropy reinforcement learning combined with uncertainty reduction of other agents' actions. Applying policy iteration to maximize the derived lower bound, we propose a practical algorithm named variational maximum mutual information multi-agent actor-critic (VM3-AC), which follows centralized learning with decentralized execution. We evaluated VM3-AC on several games requiring coordination, and numerical results show that VM3-AC outperforms other MARL algorithms in multi-agent tasks requiring high-quality coordination.

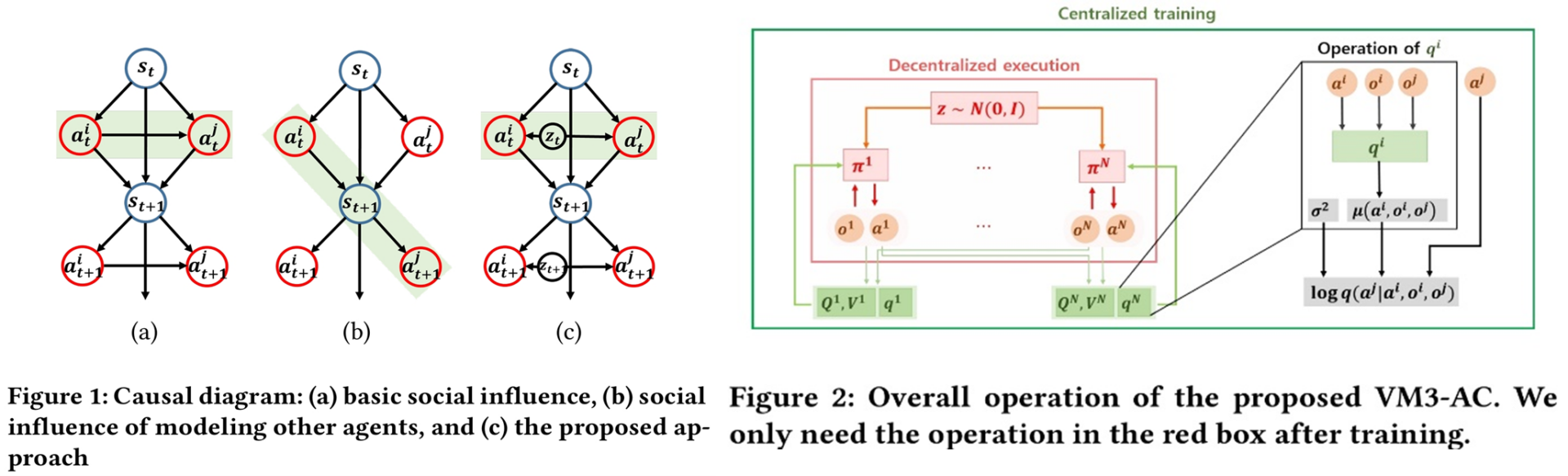

Main Figure:

Hyun-Young Park, Shahab Asoodeh, Si-Hyeon Lee, “Exactly Minimax-Optimal Locally Differentially Private Sampling,” NeurIPS 2024, Dec. 2024

Abstract: The sampling problem under local differential privacy requirements has recently been studied with potential applications to generative models, but a fundamental analysis of its privacy-utility trade-off (PUT) remains incomplete. In this work, we define the fundamental PUT of private sampling in the minimax sense, using the f-divergence between original and sampling distributions as the utility measure. We characterize the exact PUT for both finite and continuous data spaces under some mild conditions on the data distributions, and propose sampling mechanisms that are universally optimal for all f-divergences. Our numerical experiments demonstrate the superiority of our mechanisms over baselines, in terms of theoretical utilities for finite data space and of empirical utilities for continuous data space.

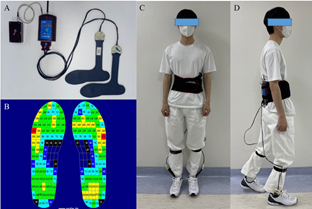

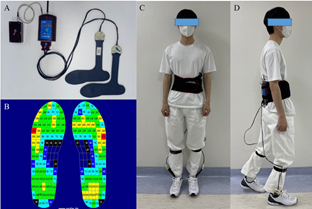

Jae-Min Park, Chang-Won Moon, Byung Chan Lee, Eungseok Oh, Juhyun Lee, Won-Jun Jang, Kang Hee Cho, Si-Hyeon Lee, “Detection of Freezing of Gait in Parkinson’s Disease from Foot-pressure Sensing Insoles using a Temporal Convolutional Neural Network,” Frontiers in Aging Neuroscience, Jul. 2024

Abstract: Freezing of gait (FoG) is a common and debilitating symptom of Parkinson’s disease (PD) that can lead to falls and reduced quality of life. Wearable sensors have been used to detect FoG, but current methods have limitations in accuracy and practicality. In this paper, we aimed to develop a deep learning model using pressure sensor data from wearable insoles to accurately detect FoG in PD patients.

We recruited 14 PD patients and collected data from multiple trials of a standardized walking test using the pedar insole system. We proposed a temporal convolutional neural network (TCNN) and applied rigorous data filtering and selective participant inclusion criteria to ensure the integrity of the dataset. We mapped the sensor data to a structured matrix and normalized it for input into our TCNN. We used a train-test split to evaluate the performance of the model.

We found that the TCNN model achieved the highest accuracy, precision, sensitivity, specificity, and F1 score for FoG detection compared to other models. The TCNN model also showed good performance in detecting FoG episodes, even under various types of sensor noise.

We demonstrated the potential of using wearable pressure sensors and machine learning models for FoG detection in PD patients. The TCNN model showed promising results and could be used in future studies to develop a real-time FoG detection system to improve PD patients’ safety and quality of life. Additionally, our noise impact analysis identifies critical sensor locations, suggesting potential for reducing sensor numbers.

Main figure:

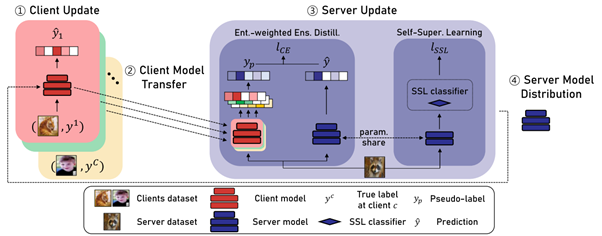

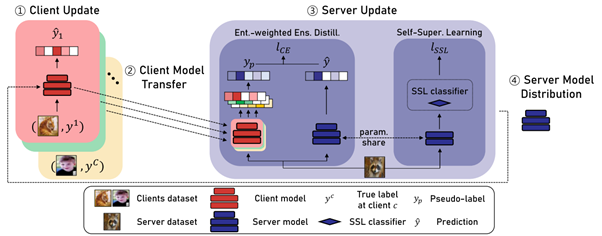

Jae-Min Park, Won-Jun Jang, Tae-Hyun Oh, Si-Hyeon Lee, “Overcoming Client Data Deficiency in Federated Learning by Exploiting Unlabeled Data on the Server,” IEEE Access, Sep. 2024

Abstract: Federated Learning (FL) is a distributed machine learning paradigm involving multiple clients to train a server model. In practice, clients often possess limited data and are not always available for simultaneous participation in FL, which can lead to data deficiency. This data deficiency degrades the entire learning process. To address this, we propose Federated learning with entropy-weighted ensemble Distillation and Self-supervised learning (FedDS). FedDS effectively handles situations with limited data per client and few clients. The key idea is to exploit the unlabeled data available on the server in the aggregating step of client models into a server model. We distill the multiple client models to a server model in an ensemble way. To robustly weigh the quality of source pseudo-labels from the client models, we propose an entropy weighting method and show a favorable tendency that our method assigns higher weights to more accurate predictions. Furthermore, we jointly leverage a separate self-supervised loss for improving generalization of the server model. We demonstrate the effectiveness of our FedDS both empirically and theoretically. For CIFAR-10, our method shows an improvement over FedAVG of 12.54% in the data deficient regime, and of 17.16% and 23.56% in the more challenging scenarios of noisy label or Byzantine client cases, respectively. For CIFAR-100 and ImageNet-100, our method shows an improvement over FedAVG of 18.68% and 15.06% in the data deficient regime, respectively.
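The entropy-weighting step described above can be sketched as follows: each client model predicts class probabilities on the server's unlabeled data, and confident (low-entropy) predictions receive larger weights in the ensemble pseudo-label. The specific weighting `exp(-entropy)` normalized across clients, and the function names, are assumptions chosen for this illustration; the abstract does not give the exact formula used by FedDS.

```python
import numpy as np

def entropy(probs, eps=1e-12):
    """Shannon entropy (in nats) along the class axis."""
    return -np.sum(probs * np.log(probs + eps), axis=-1)

def entropy_weighted_ensemble(client_probs):
    """Combine client predictions into one pseudo-label per sample.

    client_probs has shape (n_clients, n_samples, n_classes).
    Weights are exp(-entropy), normalized over clients for each sample
    (an assumed form, not necessarily the paper's).
    """
    h = entropy(client_probs)                    # (n_clients, n_samples)
    weights = np.exp(-h)
    weights = weights / weights.sum(axis=0, keepdims=True)
    ensemble = np.sum(weights[..., None] * client_probs, axis=0)
    return ensemble, weights

# Two clients, one unlabeled sample, three classes.
client_probs = np.array([
    [[0.90, 0.05, 0.05]],   # confident client
    [[1/3, 1/3, 1/3]],      # maximally uncertain client
])
pseudo_labels, weights = entropy_weighted_ensemble(client_probs)
```

In this toy case the confident client dominates the pseudo-label, which would then serve as the distillation target for the server model.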

Main figure:

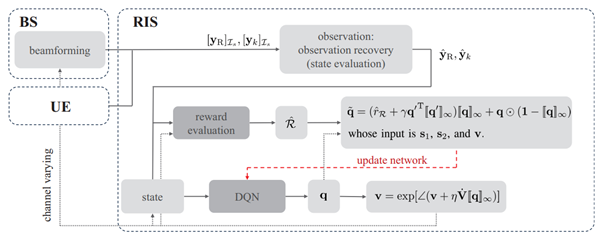

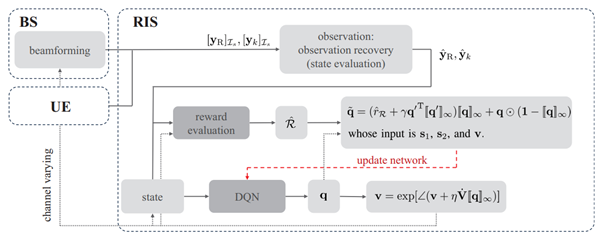

Title: A Deep Reinforcement Learning Approach for Autonomous Reconfigurable Intelligent Surfaces

Conference: IEEE International Conference on Communications Workshops (ICC) 2024

Abstract: A reconfigurable intelligent surface (RIS) is a prospective wireless technology that enhances wireless channel quality. An RIS is often equipped with a passive array of elements and provides cost- and power-efficient solutions for coverage extension of wireless communication systems. Without any radio frequency (RF) chains or computing resources, however, the RIS requires control information to be sent to it from an external unit, e.g., a base station (BS). The control information can be delivered by wired or wireless channels, and the BS must be aware of the RIS and the RIS-related channel conditions in order to effectively configure its behavior. Recent works have introduced hybrid RIS structures possessing a few active elements that can sense and digitally process received data. Here, we propose the operation of an entirely autonomous RIS that operates without a control link between the RIS and BS. Using a few sensing elements, the autonomous RIS employs a deep Q network (DQN) based on reinforcement learning in order to enhance the sum rate of the network. Our results illustrate the potential of deploying autonomous RISs in wireless networks with essentially no network overhead.

Main Figure:

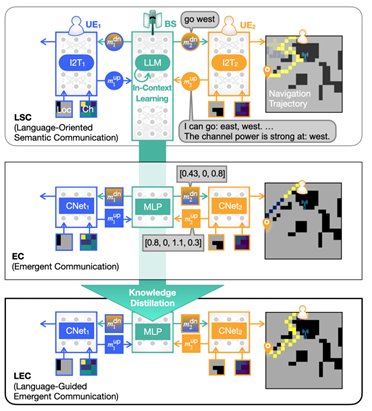

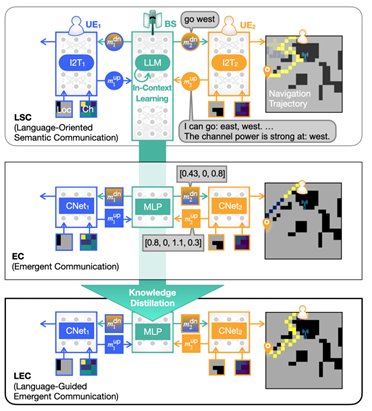

Title: Knowledge Distillation from Language-Oriented to Emergent Communication for Multi-Agent Remote Control

Conference: IEEE International Conference on Communications (ICC) 2024

Abstract: In this work, we compare emergent communication (EC) built upon multi-agent deep reinforcement learning (MADRL) and language-oriented semantic communication (LSC) empowered by a pre-trained large language model (LLM) using human language. In a multi-agent remote navigation task, with multimodal input data comprising location and channel maps, it is shown that EC incurs high training cost and struggles when using multimodal data, whereas LSC yields high inference computing cost due to the LLM’s large size. To address their respective bottlenecks, we propose a novel framework of language-guided EC (LEC) by guiding the EC training using LSC via knowledge distillation (KD). Simulations corroborate that LEC achieves faster travel time while avoiding areas with poor channel conditions, as well as speeding up the MADRL training convergence by up to 61.8% compared to EC.

Main Figure:

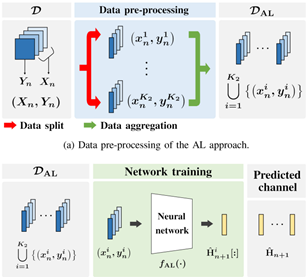

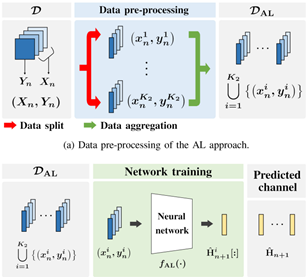

Title: Machine Learning-based Channel Prediction in Wideband Massive MIMO Systems with Small Overhead for Adaptive Online Training

Journal: IEEE Open Journal of the Communications Society

Abstract: Channel prediction compensates for outdated channel state information in multiple-input multiple-output (MIMO) systems. Machine learning (ML) techniques have recently been implemented to design channel predictors by leveraging the temporal correlation of wireless channels. However, most ML-based channel prediction techniques have only considered offline training when generating channel predictors, which can result in poor performance when encountering channel environments different from the ones they were trained on. To ensure prediction performance in varying channel conditions, we propose an online re-training framework that trains the channel predictor from scratch to effectively capture and respond to changes in the wireless environment. The training time includes data collection time and neural network training time, and should be minimized for practical channel predictors. To reduce the training time, especially data collection time, we propose a novel ML-based channel prediction technique called the aggregated learning (AL) approach for wideband massive MIMO systems. In the proposed AL approach, the training data can be split and aggregated in either the array domain or the frequency domain, which are the channel domains of MIMO-OFDM systems. This processing can significantly reduce the time for data collection. Our numerical results show that the AL approach even improves channel prediction performance in various scenarios with small training time overhead.

Main Figure:

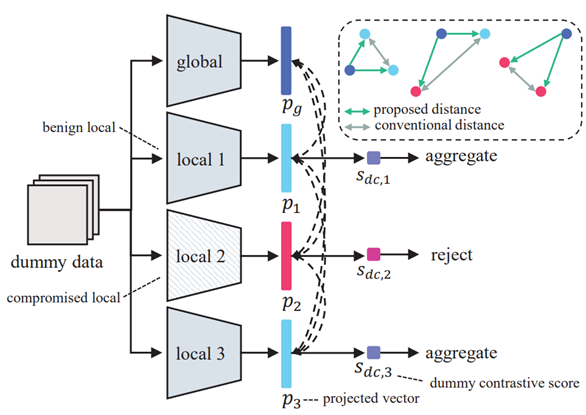

Title: Security-Preserving Federated Learning via Byzantine-Sensitive Triplet Distance

Venue: 2024 IEEE International Symposium on Biomedical Imaging (ISBI)

Abstract: While being an effective framework for learning a shared model across multiple edge devices, federated learning (FL) is generally vulnerable to Byzantine attacks from adversarial edge devices. While existing works on FL mitigate such compromised devices by only aggregating a subset of the local models at the server side, they still cannot successfully ignore the outliers due to an imprecise scoring rule. In this paper, we propose an effective Byzantine-robust FL framework, namely dummy contrastive aggregation, by defining a novel scoring function that sensitively discriminates whether the model has been poisoned or not. The key idea is to extract essential information from every local model along with the previous global model to define a distance measure in a manner similar to triplet loss. Numerical results validate the advantage of the proposed approach by showing improved performance as compared to the state-of-the-art Byzantine-resilient aggregation methods, e.g., Krum, Trimmed-mean, and Fang.

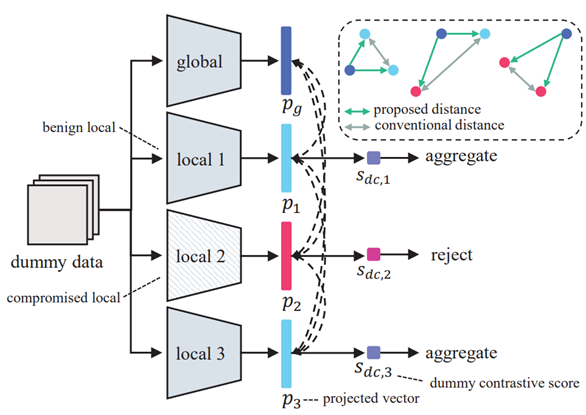

Main Figure:

Title: On the Temperature of Bayesian Graph Neural Networks for Conformal Prediction

Venue: NeurIPS 2023 New Frontiers in Graph Learning Workshop

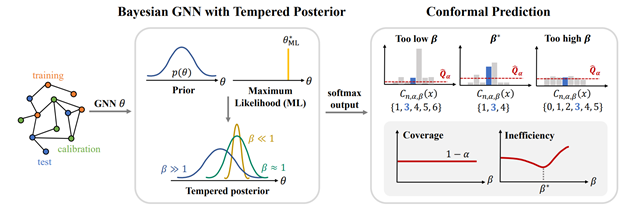

Abstract: Accurate uncertainty quantification in graph neural networks (GNNs) is essential, especially in high-stakes domains where GNNs are frequently employed. Conformal prediction (CP) offers a promising framework for quantifying uncertainty by providing valid prediction sets for any black-box model. CP ensures formal probabilistic guarantees that a prediction set contains a true label with a desired probability. However, the size of prediction sets, known as inefficiency, is influenced by the underlying model and data generating process. On the other hand, Bayesian learning also provides a credible region based on the estimated posterior distribution, but this region is well-calibrated only when the model is correctly specified. Building on a recent work that introduced a scaling parameter for constructing valid credible regions from posterior estimates, our study explores the advantages of incorporating a temperature parameter into Bayesian GNNs within the CP framework. We empirically demonstrate the existence of temperatures that result in more efficient prediction sets. Furthermore, we conduct an analysis to identify the factors contributing to inefficiency and offer valuable insights into the relationship between CP performance and model calibration.
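To make the notions of prediction set and inefficiency concrete, here is a minimal sketch of standard split conformal prediction for classification, with a temperature knob. It is a simplified stand-in and not the paper's setup: the temperature here rescales the softmax of fixed logits, whereas the abstract concerns a temperature parameter in Bayesian GNNs, and the synthetic data, the score `1 - p(true label)`, and all function names are assumptions of this example.

```python
import numpy as np

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax along the class axis."""
    z = logits / temperature
    z = z - z.max(axis=-1, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def conformal_sets(cal_logits, cal_labels, test_logits, alpha=0.1, temperature=1.0):
    """Split conformal prediction with score 1 - p(true label).

    Returns a boolean array (n_test, n_classes); True marks labels
    included in each prediction set.
    """
    n = len(cal_labels)
    cal_p = softmax(cal_logits, temperature)
    scores = 1.0 - cal_p[np.arange(n), cal_labels]
    # Finite-sample corrected quantile level, capped at 1.
    level = min(1.0, np.ceil((n + 1) * (1 - alpha)) / n)
    qhat = np.quantile(scores, level, method="higher")
    test_p = softmax(test_logits, temperature)
    return (1.0 - test_p) <= qhat

# Synthetic logits: the true class gets a boost, plus noise.
rng = np.random.default_rng(0)
n_cal, n_test, n_classes = 200, 50, 5
cal_labels = rng.integers(0, n_classes, size=n_cal)
cal_logits = rng.normal(size=(n_cal, n_classes))
cal_logits[np.arange(n_cal), cal_labels] += 2.0
test_logits = rng.normal(size=(n_test, n_classes))

# Inefficiency = average prediction-set size, compared across temperatures.
inefficiency = {
    t: conformal_sets(cal_logits, cal_labels, test_logits, temperature=t).sum(axis=1).mean()
    for t in (0.5, 1.0, 2.0)
}
```

The coverage guarantee comes from the calibration quantile and holds for any temperature; what the temperature can change is the average set size, which is the quantity the abstract calls inefficiency.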

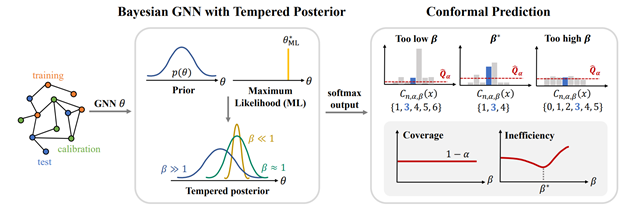

Main Figure: