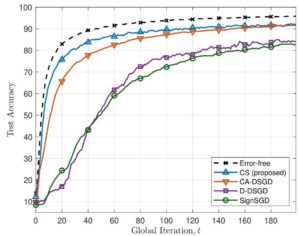

Abstract: Federated learning (FL) is an actively studied training protocol for distributed artificial intelligence (AI). One challenge in implementing it is the communication bottleneck in the uplink from the devices to the FL server. To address this issue, many studies have sought to improve communication efficiency. In particular, analog transmission in wireless implementations offers a new opportunity by allowing the whole bandwidth to be fully reused by each device. However, despite the communication efficiency of analog FL, the parameters must still be compressed to fit the allocated communication bandwidth. In this paper, we introduce the count-sketch (CS) algorithm as a compression scheme for analog FL to overcome the limited channel resources. We develop a more communication-efficient FL system by applying the CS algorithm to the wireless implementation of FL. Numerical experiments show that the proposed scheme outperforms the benchmark schemes, namely CA-DSGD and state-of-the-art digital schemes. Furthermore, we observe that the proposed scheme is considerably robust with respect to transmission power and channel resources.
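For readers unfamiliar with count-sketch, the following is a minimal illustrative sketch in Python with NumPy, not the scheme developed in this paper. The function names (`make_hashes`, `sketch`, `estimate`), the table sizes, and the use of fully random hash tables in place of pairwise-independent hash functions are all assumptions made for illustration. It shows how a long parameter vector can be compressed into a small table and approximately recovered, and that the sketch is linear, so the sum of several sketches equals the sketch of the summed vectors.

```python
import numpy as np


def make_hashes(dim, rows, cols, seed=0):
    """Draw random bucket indices and random signs (illustrative stand-in for hash functions)."""
    rng = np.random.default_rng(seed)
    buckets = rng.integers(0, cols, size=(rows, dim))            # bucket of each coordinate, per row
    signs = rng.choice(np.array([-1.0, 1.0]), size=(rows, dim))  # random sign of each coordinate, per row
    return buckets, signs


def sketch(vec, buckets, signs, cols):
    """Compress `vec` (length dim) into a rows x cols count-sketch table."""
    rows = buckets.shape[0]
    table = np.zeros((rows, cols))
    for r in range(rows):
        # Add each signed coordinate into its bucket for this row.
        table[r] = np.bincount(buckets[r], weights=signs[r] * vec, minlength=cols)
    return table


def estimate(table, buckets, signs):
    """Estimate every coordinate as the median over rows of its signed bucket value."""
    rows = buckets.shape[0]
    per_row = np.stack([signs[r] * table[r, buckets[r]] for r in range(rows)])
    return np.median(per_row, axis=0)
```

All devices would have to share the same `buckets` and `signs` (for example, through a common seed) for their tables to be summed meaningfully. Recovery is approximate in general: coordinates with large magnitude are estimated well, while small ones are perturbed by collisions within a bucket.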