Title:

Deep Reinforcement Learning-based Channel-flexible Equalization Scheme: An Application to High Bandwidth Memory (DesignCon2022)

Authors:

Seonguk Choi, Minsu Kim, Hyunwook Park, Haeyeon Rachel Kim, Joonsang Park, Jihun Kim, Keeyoung Son, Seongguk Kim, Keunwoo Kim, Daehwan Lho, Jiwon Yoon, Jinwook Song, Kyungsuk Kim, Jonggyu Park and Joungho Kim.

Abstract:

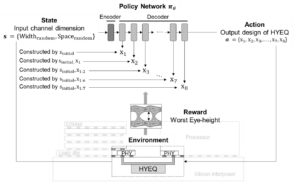

In this paper, we propose a reusable, channel-flexible hybrid equalizer (HYEQ) design methodology based on deep reinforcement learning (DRL). The proposed method produces an optimized HYEQ design for arbitrary channel dimensions. The HYEQ comprises a continuous-time linear equalizer (CTLE) for high-frequency boosting and a passive equalizer (PEQ) for low-frequency attenuation, and our task is to co-optimize the two. Our model acts as a solver that optimizes the equalizer design while accounting for signal integrity issues, including high-frequency attenuation and crosstalk.

Our method uses a recursive neural network, of the kind commonly employed in natural language processing (NLP), to design the HYEQ through constructive DRL. Each equalizer parameter is therefore designed sequentially, taking the other parameters into account. The machine learning (ML) design space is determined by applying equalizer domain knowledge, which allows precise optimization. Furthermore, the trained neural network provides fast inference for any channel dimension. We validate that the proposed method outperforms conventional optimization algorithms such as random search (RS) and the genetic algorithm (GA) in a 3-coupled channel system of next-generation high-bandwidth memory (HBM).
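
The sequential, constructive design described above can be pictured with a minimal Python sketch. Everything in it is an assumption made for illustration: the parameter names, the value grids in `DESIGN_SPACE`, and the `toy_policy_scores` function, which stands in for the trained network, are hypothetical and are not taken from the paper.

```python
import math
import random

# Hypothetical discretized design space (names and values are illustrative only).
DESIGN_SPACE = {
    "ctle_gain_db": [0.0, 2.0, 4.0, 6.0],
    "ctle_zero_ghz": [1.0, 2.0, 4.0],
    "peq_resistance_ohm": [25.0, 50.0, 100.0],
}


def softmax(scores):
    """Convert raw scores into a probability distribution."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def toy_policy_scores(chosen, candidates):
    """Stand-in for a trained network (assumption, not the paper's model).

    The score of each candidate depends on the parameters already chosen,
    so later decisions are conditioned on earlier ones.
    """
    context = sum(chosen.values())
    return [-abs(c - 0.1 * context) for c in candidates]


def construct_design(rng):
    """Build one design by choosing parameters one at a time."""
    chosen = {}
    for name, candidates in DESIGN_SPACE.items():
        probs = softmax(toy_policy_scores(chosen, candidates))
        chosen[name] = rng.choices(candidates, weights=probs, k=1)[0]
    return chosen


design = construct_design(random.Random(0))
print(design)
```

In a DRL setting, a reward computed from the resulting design (for example, a simulated eye-diagram metric) would be used to update the policy; that training loop is omitted here.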