Title : Reinforcement-Learning-Based Signal Integrity Optimization and Analysis of a Scalable 3-D X-Point Array Structure

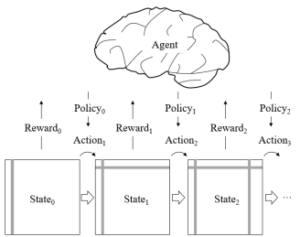

Abstract : In this article, we propose, for the first time, a reinforcement learning (RL) model that designs an optimal 3-D cross-point (X-Point) array structure while accounting for signal integrity issues. The interconnection design problem is modeled as a Markov decision process (MDP). The proposed RL model designs the 3-D X-Point array structure based on three reward factors: the number of bits, the crosstalk, and the IR drop. The policy is parameterized with a multilayer perceptron (MLP) and long short-term memory (LSTM), and its parameters are trained with proximal policy optimization (PPO). The reward of the proposed RL model converges well under variations in the array structure size and in the hyperparameters of the reward factors. We verified the scalability and sensitivity of the proposed RL model. Using the optimal 3-D X-Point array structure design, we analyzed the reward factors and signal integrity issues. The optimal design of the 3-D X-Point array structure shows 17%–26.5% better signal integrity performance than the conventional design in finer process technology. In addition, we suggest possible directions for improving the proposed model, including variations in the MDP tuples, reward factors, and learning algorithms, among others. With the proposed model, an optimal 3-D X-Point array structure can be designed for a given size, performance target, and specification by choosing the reward factors and their hyperparameters.
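To make the role of the three reward factors concrete, the following is a minimal sketch of how they could be combined into a single scalar reward. The abstract does not state the functional form, so the linear weighting, the normalization of the inputs, and the names `RewardWeights` and `reward` are all assumptions made for illustration, not the formulation used in the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    # Hypothetical hyperparameters of the reward factors; the abstract
    # gives neither their values nor how they enter the reward.
    bits: float = 1.0
    crosstalk: float = 1.0
    ir_drop: float = 1.0


def reward(num_bits: float, crosstalk: float, ir_drop: float,
           w: RewardWeights = RewardWeights()) -> float:
    """Assumed linear scalarization of the three reward factors.

    More bits increases the reward; crosstalk and IR drop decrease it.
    All inputs are assumed to be normalized to comparable ranges,
    for example [0, 1].
    """
    return w.bits * num_bits - w.crosstalk * crosstalk - w.ir_drop * ir_drop
```

Under this assumed form, raising one weight relative to the others shifts the trade-off that a policy trained on the reward is encouraged to make, which is one way to read the sensitivity to reward-factor hyperparameters mentioned above.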