Title : Design of Processing-in-Memory with Triple Computational Path and Sparsity Handling for Energy-Efficient DNN Training

Authors : Wontak Han, Jaehoon Heo, Junsoo Kim, Sukbin Lim, Joo-Young Kim

Publications : IEEE Journal on Emerging and Selected Topics in Circuits and Systems (JETCAS), 2022

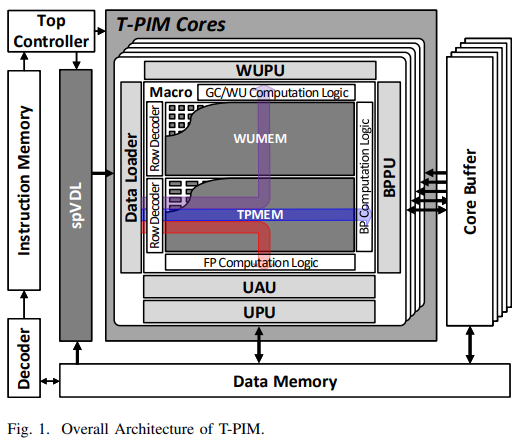

As machine learning (ML) and artificial intelligence (AI) have become mainstream technologies, many accelerators have been proposed to handle their computation kernels. However, because deep neural network models are large, these accelerators access external memory frequently and suffer from the von Neumann bottleneck. Moreover, as privacy concerns become more critical, on-device training is emerging as a solution. On-device training is challenging because training requires far more computations and memory accesses than inference, yet it must run under a limited power budget. In this paper, we present T-PIM, an energy-efficient processing-in-memory (PIM) architecture that supports end-to-end on-device training. Its macro design includes an 8T-SRAM cell-based PIM block that computes the in-memory AND operation and three computational datapaths for end-to-end training. The three computational paths integrate arithmetic units for forward propagation, backward propagation, and gradient calculation with weight update, respectively, allowing the weight data to remain stationary in memory. T-PIM also supports variable bit precision to cover various ML scenarios. For forward propagation, it supports fully variable input bit precision and 2-bit, 4-bit, 8-bit, and 16-bit weight precision; for backward propagation, it supports the same input bit precision with 16-bit weight precision. In addition, T-PIM implements sparsity handling schemes that skip the computation for input data and turn off the arithmetic units for weight data, reducing both unnecessary computations and leakage power. Finally, we fabricate the T-PIM chip on a 5.04 mm² die in a 28-nm CMOS logic process. It operates at 50–280 MHz with a supply voltage of 0.75–1.05 V, dissipating 5.25–51.23 mW in inference and 6.10–37.75 mW in training. As a result, it achieves 17.90–161.08 TOPS/W energy efficiency for inference with 1-bit activation and 2-bit weight data, and 0.84–7.59 TOPS/W for training with 8-bit activation/error and 16-bit weight data. In conclusion, T-PIM is the first PIM chip that supports end-to-end training, demonstrating a 2.02 times performance improvement over the latest PIM that partially supports training.
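
To make the idea of computing with an in-memory AND operation at variable bit precision more concrete, the following is a minimal software sketch, not the T-PIM circuit itself. It assumes unsigned operands, a bit-serial schedule over input bit planes, and a simple form of input sparsity handling in which an all-zero input bit plane is skipped. The function name `and_bit_serial_dot` and its parameters are hypothetical and chosen only for illustration.

```python
from typing import List, Tuple


def and_bit_serial_dot(
    inputs: List[int],
    weights: List[int],
    input_bits: int,
    weight_bits: int,
) -> Tuple[int, int]:
    """Unsigned dot product built only from 1-bit ANDs and shift-and-add.

    Returns (dot_product, skipped_planes), where skipped_planes counts the
    input bit planes that were all zero and therefore skipped.
    This is an illustrative model, not the authors' hardware datapath.
    """
    assert len(inputs) == len(weights)
    total = 0
    skipped_planes = 0
    for i in range(input_bits):
        # Extract bit i of every input (one "bit plane").
        in_plane = [(x >> i) & 1 for x in inputs]
        if not any(in_plane):
            # Assumed sparsity handling: nothing to compute for this plane.
            skipped_planes += 1
            continue
        for j in range(weight_bits):
            # 1-bit AND between the input plane and bit j of each weight,
            # followed by a population count over the column.
            partial = sum(a & ((w >> j) & 1) for a, w in zip(in_plane, weights))
            # Shift by the combined bit significance and accumulate.
            total += partial << (i + j)
    return total, skipped_planes


if __name__ == "__main__":
    result, skipped = and_bit_serial_dot([3, 0, 5, 2], [7, 1, 2, 4], 4, 4)
    print(result, skipped)
```

In this sketch, changing `input_bits` or `weight_bits` changes only the number of AND passes, which is one way to see why a bit-serial scheme can trade precision for work per operation.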