Conference/Journal, Year: ECCV, 2020

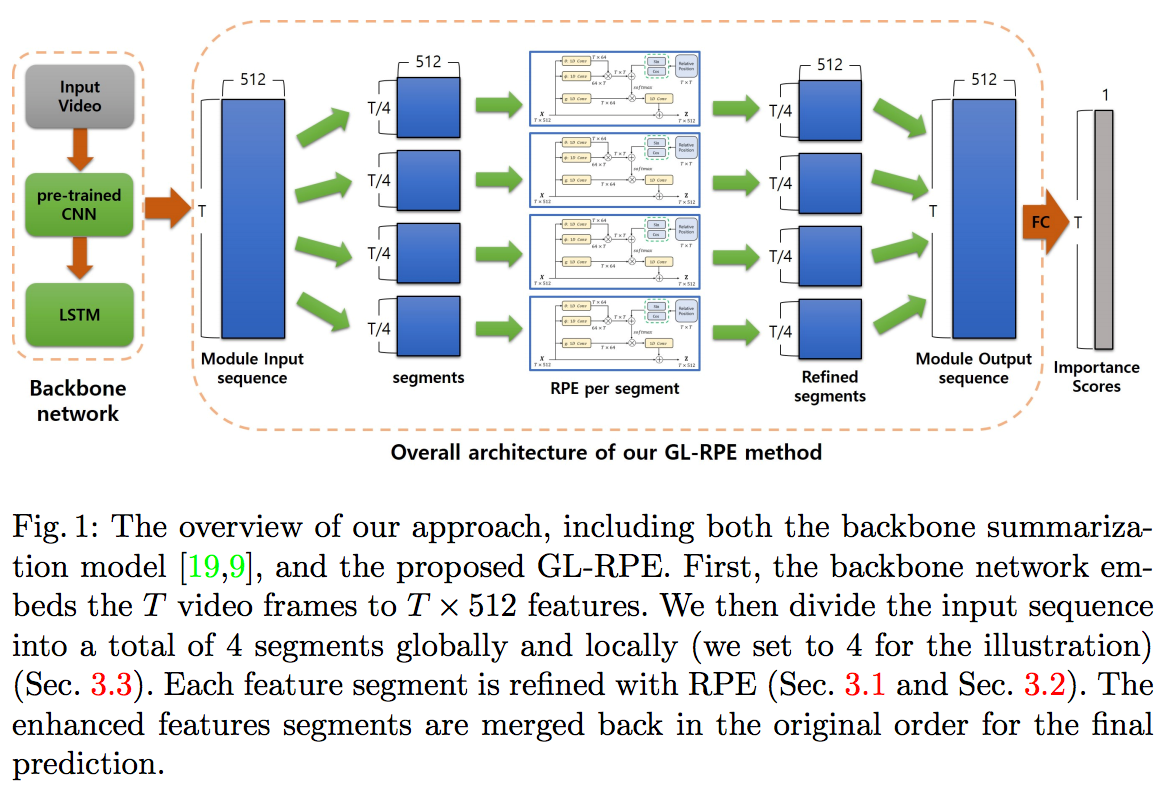

To summarize a video properly, it is important to grasp both the sequential structure of the video and the long-term dependencies between frames. This need is especially clear in unsupervised learning. One possible solution is to borrow a well-known technique from natural language processing for modeling long-term dependencies and sequential order: self-attention with relative position embedding (RPE). However, compared to natural language processing, video summarization requires capturing a much longer global context. In this paper, we therefore present a novel input decomposition strategy that samples the input both globally and locally. This provides an effective temporal window for RPE to operate in and significantly improves overall computational efficiency. Combining the Global-and-Local input decomposition with RPE yields GL-RPE. Our approach allows the network to capture both local and global interdependencies between video frames effectively. Since GL-RPE can be easily integrated into existing methods, we apply it to two different unsupervised backbones. We provide extensive ablation studies and visual analysis to verify the effectiveness of our proposals. We demonstrate that our approach achieves new state-of-the-art performance on the recently proposed rank-order-based metrics, Kendall’s τ and Spearman’s ρ. Furthermore, although our method is unsupervised, we show that it performs on par with the fully supervised method.
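
The abstract does not spell out how the input is sampled "both globally and locally", so the following is only a minimal sketch under stated assumptions: the local branch is taken to be contiguous chunks of the frame sequence, and the global branch is taken to be strided subsequences that span the whole video. The function names `local_decompose` and `global_decompose` and the `window` parameter are hypothetical and are not taken from the paper.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def local_decompose(frames: Sequence[T], window: int) -> List[List[T]]:
    """Assumed local sampling: contiguous chunks of at most `window` frames."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [list(frames[i:i + window]) for i in range(0, len(frames), window)]


def global_decompose(frames: Sequence[T], window: int) -> List[List[T]]:
    """Assumed global sampling: strided subsequences of at most `window` frames.

    Each subsequence takes every `stride`-th frame, so it covers the whole
    video at a coarse temporal resolution.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    stride = -(-len(frames) // window)  # ceil(len(frames) / window)
    return [list(frames[offset::stride]) for offset in range(stride)]


if __name__ == "__main__":
    frames = list(range(12))  # stand-in for 12 frame features
    print(local_decompose(frames, 4))
    print(global_decompose(frames, 4))
```

Under these assumptions, every subsequence has at most `window` elements, so relative positions only need to be embedded over a short, fixed range, and self-attention runs on several short sequences instead of one sequence of full video length.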