Sihaeng Lee, Janghyeon Lee, Byungju Kim, Eojindl Yi and Junmo Kim

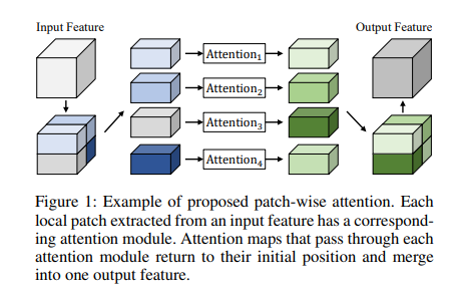

In computer vision, monocular depth estimation is the problem of obtaining a high-quality depth map from a two-dimensional image. This map provides information on three-dimensional scene geometry, which is necessary for various applications in academia and industry, such as robotics and autonomous driving. Recent studies based on convolutional neural networks achieved impressive results for this task. However, most previous studies did not consider the relationships between the neighboring pixels in a local area of the scene. To overcome the drawbacks of existing methods, we propose a patch-wise attention method for focusing on each local area. After extracting patches from an input feature map, our module generates attention maps for each local patch, using two attention modules for each patch along the channel and spatial dimensions. Subsequently, the attention maps return to their initial positions and merge into one attention feature. Our method is straightforward but effective. The experimental results on two challenging datasets, KITTI and NYU Depth V2, demonstrate that the proposed method achieves significant performance. Furthermore, our method outperforms other state-of-the-art methods on the KITTI depth estimation benchmark.