Title: Deep Reinforcement Learning Framework for Optimal Decoupling Capacitor Placement on General PDN with an Arbitrary Probing Port (EPEPS 2021)

Authors: Haeyeon Kim, Hyunwook Park, Minsu Kim, Seonguk Choi, Jihun Kim, Joonsang Park, Seongguk Kim, Subin Kim and Joungho Kim.

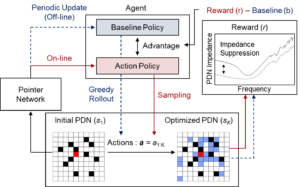

Abstract: This paper proposes a deep reinforcement learning (DRL) framework that learns a reusable policy for finding the optimal placement of decoupling capacitors (decaps) on a power distribution network (PDN) with an arbitrary probing port. The framework trains a policy parameterized by a pointer network, a sequence-to-sequence neural network, using the REINFORCE algorithm. The policy finds the combination of positions for a pre-defined number of decaps that best suppresses the self-impedance of a given probing port on a PDN with randomly assigned keep-out regions. The framework was verified by placing 20 decaps on ten randomly generated test sets, each with an arbitrary probing port and randomly selected keep-out regions. Performance of the resulting policy was evaluated by the magnitude of self-impedance suppression at the probing port after decap placement, measured over 434 frequencies between 100 MHz and 20 GHz. The policy achieves greater impedance suppression with fewer samples than a random search heuristic.
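To make the problem setup concrete, the following is a minimal sketch of the random search baseline the abstract compares against. It is not the authors' implementation. The 10x10 port grid, the function names, and especially `toy_reward` are assumptions introduced for illustration: the paper scores a placement by self-impedance suppression over 434 frequencies, whereas this sketch uses a simple distance-based stand-in so that it runs without a PDN model.

```python
import random


def feasible_ports(rows, cols, probe, keep_out):
    """List every grid port where a decap may be placed.

    The probing port and all keep-out ports are excluded.
    """
    blocked = set(keep_out) | {probe}
    return [(r, c) for r in range(rows) for c in range(cols)
            if (r, c) not in blocked]


def toy_reward(placement, probe):
    """Hypothetical stand-in for impedance suppression.

    ASSUMPTION: decaps closer to the probing port score higher. The
    paper's reward is computed from the PDN self-impedance instead.
    """
    return -sum(abs(r - probe[0]) + abs(c - probe[1]) for r, c in placement)


def random_search(rows, cols, probe, keep_out, n_decaps, n_samples, seed=0):
    """Sample random feasible placements and keep the best-scoring one."""
    rng = random.Random(seed)
    candidates = feasible_ports(rows, cols, probe, keep_out)
    best_placement, best_reward = None, float("-inf")
    for _ in range(n_samples):
        # Draw n_decaps distinct ports uniformly from the feasible set.
        placement = rng.sample(candidates, n_decaps)
        reward = toy_reward(placement, probe)
        if reward > best_reward:
            best_placement, best_reward = placement, reward
    return best_placement, best_reward


if __name__ == "__main__":
    probe = (4, 4)                       # arbitrary probing port
    keep_out = [(4, 5), (3, 4), (0, 0)]  # randomly assigned in the paper
    placement, reward = random_search(10, 10, probe, keep_out,
                                      n_decaps=20, n_samples=100)
    print(sorted(placement), reward)
```

A learned policy would replace the uniform `rng.sample` draw with a distribution over the same feasible ports, which is where the claimed sample-efficiency gain over random search would come from.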