Imitate Expert Policy and Learn Beyond: A Practical PDN Optimizer by Imitation Learning (DesignCon 2022, Best Paper Award nominee and Early Career Best Paper Award finalist)

Authors: Haeyeon Kim, Minsu Kim, Seonguk Choi, Jihun Kim, Joonsang Park, Keeyoung Son, Hyunwook Park, Subin Kim and Joungho Kim

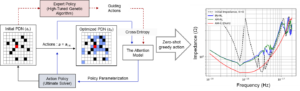

Abstract: This paper proposes a practical and reusable decoupling capacitor (decap) placement solver based on attention model-based imitation learning (AM-IL). The proposed AM-IL framework imitates an expert policy using pre-collected guiding datasets and trains a policy that outperforms existing machine learning methods. The trained policy is reusable across power distribution networks (PDNs) with different probing ports and keep-out regions, so the trained policy itself serves as the decap placement solver. In this paper, a genetic algorithm is used as the expert policy to verify that the proposed method produces a solver that learns beyond the level of its expert. The expert policy can be replaced by any algorithm or conventional tool, which makes this a fast and effective way to improve existing methods. Moreover, by taking existing industry data as guiding data, or human experts as the expert policy, the proposed method can construct a reusable decap placement solver that is data-efficient and practical and that offers promising performance. AM-IL is verified against two deep reinforcement learning methods based on neural combinatorial optimization networks, AM-RL and Ptr-RL. AM-IL achieved a performance score of 11.72, compared with 10.74 for AM-RL and 9.76 for Ptr-RL. Unlike meta-heuristic methods such as genetic algorithms, which require numerous iterations to find a near-optimal solution, the proposed AM-IL generates a near-optimal solution to any given problem in a single trial.
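To make the idea of imitating an expert from guiding data concrete, the following is a minimal sketch of one common imitation objective: the mean negative log-likelihood of the expert's placement sequence under the learned policy, with the probing port, keep-out ports, and already-used ports masked out. The abstract does not state the loss that AM-IL uses, so this objective, the function names (`masked_log_softmax`, `imitation_loss`), and the flat port indexing are all assumptions made for illustration, and the attention model that would produce the scores is omitted.

```python
import math


def masked_log_softmax(logits, allowed):
    """Log-probabilities over ports; disallowed ports get -inf."""
    valid = [score for score, ok in zip(logits, allowed) if ok]
    if not valid:
        raise ValueError("no port is available for placement")
    peak = max(valid)  # subtract the max for numerical stability
    log_z = peak + math.log(sum(math.exp(score - peak) for score in valid))
    return [score - log_z if ok else float("-inf")
            for score, ok in zip(logits, allowed)]


def imitation_loss(step_logits, expert_ports, blocked):
    """Mean negative log-likelihood of the expert's placement sequence.

    step_logits[t][p]: policy score for port p at step t
                       (hypothetical model output, assumed for this sketch)
    expert_ports[t]:   port the expert (e.g. a genetic algorithm) chose at step t
    blocked:           ports that can never hold a decap
                       (probing port and keep-out region)
    """
    if not expert_ports:
        raise ValueError("expert sequence is empty")
    used = set(blocked)
    total = 0.0
    for logits, port in zip(step_logits, expert_ports):
        allowed = [p not in used for p in range(len(logits))]
        if not allowed[port]:
            raise ValueError(f"expert chose unavailable port {port}")
        total -= masked_log_softmax(logits, allowed)[port]
        used.add(port)  # a port holds at most one decap in this sketch
    return total / len(expert_ports)


# Toy guiding sample: 4 ports, port 0 is blocked, expert places decaps on 1 then 2.
loss = imitation_loss([[0.0] * 4, [0.0] * 4], [1, 2], {0})
```

With uniform scores, the policy assigns probability 1/3 to the first expert choice and 1/2 to the second, so `loss` equals ln(6)/2, roughly 0.896. Minimizing this quantity over many expert sequences would push the policy toward the expert's choices. How the trained policy then surpasses the expert is not explained in the abstract and is not modeled here.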