EE Prof. Minsoo Rhu and Prof. Min Seok Jang elected members of ‘2023 Y-KAIST’ by the National Academy of Science and Technology as “Young Leaders to Lead the Development of Science and Technology”.

[Prof. Joungho Kim, Hyunwook Park, from left]

-Award Name: Best Poster Award

-Paper Title: Scalable Transformer Network-based Reinforcement Learning Method for PSIJ Optimization in HBM

-Authors: Hyunwook Park, Taein Shin, Seongguk Kim, Daehwan Lho, Boogyo Sim, Jinouk Song, Kyu-Bong, and Joungho Kim (Corresponding author)

-Conference Name: 2022 IEEE 31st Conference on Electrical Performance of Electronic Packaging and Systems

-Time of the event: October 9 to 12, 2022, in San Jose, CA, USA

KAIST EE postdoctoral researcher Hyunwook Park (under the supervision of Professor Joungho Kim) won the Best Poster Award at the 2022 IEEE 31st Conference on Electrical Performance of Electronic Packaging and Systems (EPEPS Conference), which was held in San Jose, California, from October 9 to 12.

The EPEPS Conference is an annual academic conference at which many prestigious universities and companies share their research in the field of semiconductor signal and power integrity.

Postdoctoral researcher Hyunwook Park presented the paper “Scalable Transformer Network-based Reinforcement Learning Method for PSIJ Optimization in HBM”, which was selected for the Best Poster Award in recognition of its excellence.

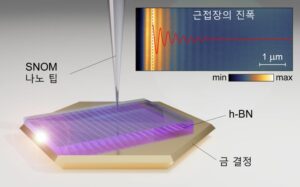

KAIST EE Prof. Jang, Min Seok and his research team succeeded in observing strongly confined mid-infrared light propagating on monocrystalline gold with a scattering-type scanning near-field optical microscope (s-SNOM).

The result is highly applicable to the development of next-generation optoelectronic devices, because confining light in an atomically flat nanostructure increases the interaction between light and matter. The study will be useful in advancing high-efficiency nanophotonics and quantum computing.

[From left, Prof. Jang, Min Seok and Research Prof. Sergey Menabde]

KAIST announced on July 18th that a joint research effort has succeeded in implementing a new platform for strongly confined light propagation in a low-dimensional material. This finding is expected to contribute to the development of next-generation optoelectronic devices based on strong light-matter interactions.

Stacking atomically flat two-dimensional materials results in van der Waals crystals, which exhibit properties different from those of the original two-dimensional materials. Phonon-polaritons are composite quasi-particles that result from the coupling of ion oscillations in a polar dielectric with electromagnetic waves. In particular, phonon-polaritons that form in van der Waals crystals placed on highly conductive metals are highly compressed. This is because the charges in the polariton crystal are mirrored in the underlying metal through the image charge effect, which produces a new kind of polariton called an image phonon-polariton.

Light propagating as image phonon-polaritons may induce strong light-matter interactions, but their propagation is limited on rough metal surfaces, which has kept them from being practical.

Prof. Jang said, “This work illustrates very well the advantages of image polaritons, especially image phonon-polaritons. Image phonon-polaritons exhibit low loss and strong light-matter interactions useful in the development of next-generation optoelectronic devices.” He added that he hopes to advance the commercialization of high-efficiency nanophotonic devices, including metasurfaces, optical switches, and optical sensors.

This work, first-authored by Research Prof. Sergey Menabde, was published in Science Advances on July 13th. It was supported by the Samsung Science & Technology Foundation and the National Research Foundation of Korea, as well as the Korea Institute of Science and Technology, the Japanese Ministry of Education, Culture, Sports, Science and Technology, and the Villum Foundation of Denmark.

Fig. 1 Nanotip used to measure, at ultra-high resolution, the image phonon-polaritons traveling in h-BN

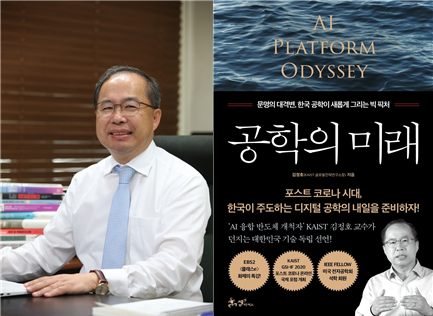

‘The Future of Engineering’, written by Professor Joung-Ho Kim of our faculty, has been published.

This new book argues that now is the best time for Korea to move forward and become a ‘First Mover’ in the era of the 4th industrial revolution, even in the chaotic situation of the post-corona era.

The professor presents a discourse on the role of Korean engineering in achieving true digital technology independence.

Against the rapidly changing environment, he takes the position that we should lead the way in overcoming the crisis caused by COVID-19 by focusing on ‘digital engineering.’

In particular, the book explains the basic principles of artificial intelligence, big data, computers, and semiconductors, which are developing rapidly in the 4th industrial revolution. It suggests directions for future innovative development and points out golden opportunities. It also explains the principles of mathematics, the basis of digital engineering, in a refreshingly readable manner.

In addition, the book presents the future of the 4th industrial revolution that will unfold after COVID-19, a development strategy for Korea, and a picture of the talent that will lead the future.

Our university’s AI quantum computing IT research center announced on September 29th that it will join the IBM Q-network to develop quantum computing for use in business and science. The IBM Q-network is a collaboration of corporations, start-ups, and research institutes working with IBM.

As the first domestic academic member of the IBM Q-network, our university will use IBM’s highly advanced quantum computing systems to conduct research projects on quantum information science and early-stage applications. The vast IBM quantum resources will also be used to train quantum information experts for the upcoming quantum computing era. Our university will take the lead in developing quantum computing infrastructure, which is one of the key technologies of the 4th industrial revolution.

Professor June-Koo Rhee, the head of the AI quantum computing IT research center, explained that quantum computing is a new technology that can solve difficult mathematical problems in a short time with low power and can reshape the future. He also mentioned that although our country is behind in quantum computing technologies, KAIST’s participation in the IBM Q-network will become an important resource for developing national competitiveness.

Our university’s AI quantum computing IT research center has been using the open-source IBM Quantum Experience on the IBM cloud for quantum AI, quantum chemistry calculation, quantum algorithm research, and quantum computing education. Our university will now be able to use IBM’s high-end quantum computers for quantum AI-based disease diagnosis, quantum chemistry computation, quantum machine learning, and other quantum-related research and experiments. We will also be able to communicate with other universities and corporations participating in the IBM Q-network.

About IBM Quantum

IBM Quantum is an initiative to build quantum systems for business and science applications. More can be found at http://www.ibm.com/ibmq. More information about the IBM Q-network, including all partners, members, and hubs, is available at https://www.research.ibm.com/ibm-q/network/.

Professor June-Koo Rhee’s research team developed a non-linear quantum machine-learning artificial intelligence algorithm through collaborative research with German and South African research teams.

Through this study, a non-linear kernel was devised to enable quantum machine learning of complex data. In particular, the quantum supervised learning algorithm developed by Professor June-Koo Rhee’s research team can run with a very small amount of computation. The algorithm therefore presents the possibility of overtaking current AI technologies that require large amounts of computation.

Professor June-Koo Rhee’s research team developed quantum forking technology that generates training and test data as quantum information and enables parallel computation on that quantum information. Combined with a simple quantum measurement technique, it forms a quantum algorithm system that implements non-linear kernel-based supervised learning by efficiently calculating similarities between quantum data. The research team successfully demonstrated quantum supervised learning on real quantum computers through IBM cloud services. Research professor Kyung-Deock Park (KAIST) participated as the first author. The result of this study was published in May 2020 in volume 6 of ‘npj Quantum Information’, a sister journal of the international journal Nature (Title: Quantum classifier with tailored quantum kernel).

Furthermore, the research team theoretically proved that it is possible to implement various quantum kernels through the systematic design of quantum circuits. In kernel-based machine learning, the optimal kernel may vary depending on the given input data. Therefore, being able to implement various quantum kernels efficiently is a significant achievement in the practical application of quantum kernel-based machine learning.
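As a rough classical illustration of kernel-based supervised learning, the sketch below simulates a similarity of the form k(x, y) = |⟨x|y⟩|^(2·power) between normalized feature vectors and labels a test point by the sign of the label-weighted kernel sum. The kernel form, the function names, the `power` parameter, and the toy data are all assumptions made for illustration; this is not the research team's quantum circuit, and it runs entirely on a classical computer.

```python
import math

def normalize(vec):
    """Scale a real vector to unit length, like the amplitudes of a quantum state."""
    norm = math.sqrt(sum(v * v for v in vec))
    if norm == 0:
        raise ValueError("cannot normalize the zero vector")
    return [v / norm for v in vec]

def fidelity_kernel(x, y, power=1):
    """Similarity |<x|y>|^(2*power) between two unit vectors (assumed kernel form)."""
    overlap = sum(a * b for a, b in zip(x, y))
    return abs(overlap) ** (2 * power)

def classify(train, test_point, power=1):
    """Return +1 or -1 from the sign of the label-weighted kernel sum."""
    x = normalize(test_point)
    score = sum(label * fidelity_kernel(normalize(sample), x, power)
                for sample, label in train) / len(train)
    return 1 if score >= 0 else -1

# Toy data (hypothetical): class +1 lies near the first axis, class -1 near the second.
train = [([1.0, 0.1], +1), ([0.9, 0.2], +1), ([0.1, 1.0], -1), ([0.2, 0.9], -1)]
print(classify(train, [1.0, 0.3]))
print(classify(train, [0.2, 1.0]))
```

Raising `power` sharpens the kernel, so that only training points very close to the test point contribute; this is one simple way a kernel can be tailored to the data.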

Research professor Kyung-Deock Park said, “The kernel-based quantum machine learning algorithm developed by the research team will surpass traditional kernel-based supervised learning in the era of Noisy Intermediate-Scale Quantum (NISQ) computing with hundreds of qubits, which is expected to be commercialized in the next few years. The developed algorithm will be actively used as a quantum machine learning algorithm for pattern recognition of complex non-linear data.”

Meanwhile, this research was carried out with the support of the Korea Research Foundation’s Creative Challenge Research Foundation Support Project, the Korea Research Foundation’s Korea-Africa Cooperation Foundation Project, and the Information and Communication Technology Expert Training Project (ITRC) supported by the Institute for Information and Communications Technology Promotion.

Congratulations again to Professor June-Koo Rhee’s research team on their outstanding performance in the field of quantum computing.

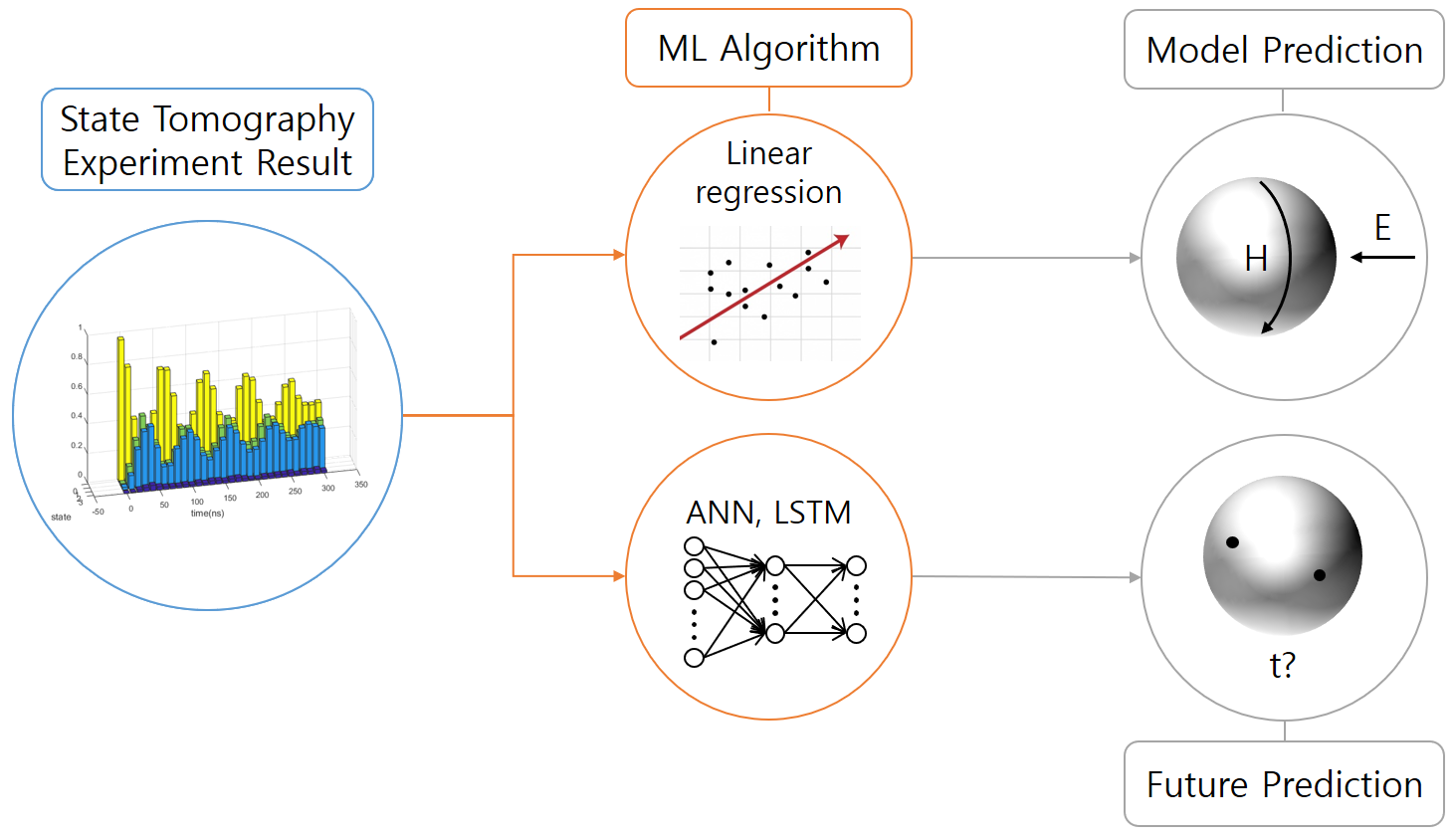

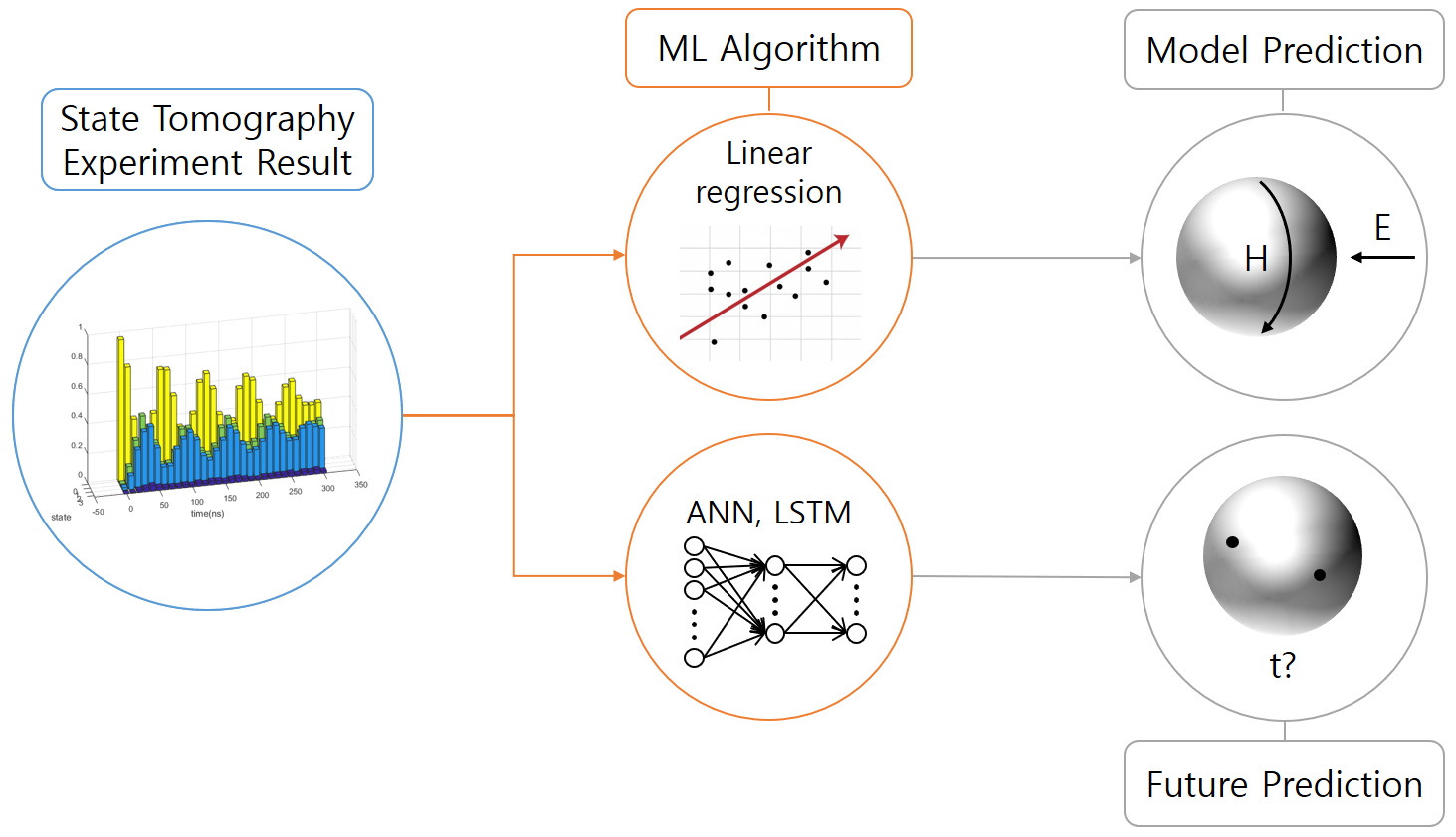

Title: Quantum tomography via classical machine learning

Authors: Changjun Kim, Daniel Kyungdeock Park, June-Koo Kevin Rhee

Determining the wave function or density matrix of a quantum system, and/or its dynamics, is of fundamental importance in quantum information science. Unfortunately, the computational cost of full quantum state and process tomography grows exponentially with the number of qubits. In this research project, we are exploring the possibility of applying classical machine learning techniques such as linear regression and deep learning to assist quantum tomography tasks.
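To make the exponential growth concrete: an n-qubit density matrix is a 2^n × 2^n Hermitian matrix with unit trace, so it has 4^n − 1 independent real parameters that full state tomography must estimate. The short sketch below simply evaluates that count; the function name is illustrative.

```python
def density_matrix_parameters(num_qubits):
    """Independent real parameters of an n-qubit density matrix: 4**n - 1."""
    if num_qubits < 1:
        raise ValueError("num_qubits must be at least 1")
    dim = 2 ** num_qubits          # Hilbert-space dimension
    return dim * dim - 1           # Hermitian matrix entries minus the trace constraint

for n in (1, 2, 5, 10):
    print(n, density_matrix_parameters(n))
# 1 3
# 2 15
# 5 1023
# 10 1048575
```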

Signal Integrity/Power Integrity Design for AI Computing Hardware

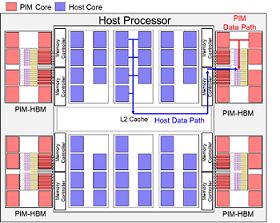

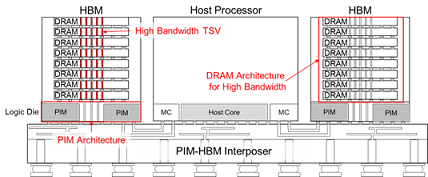

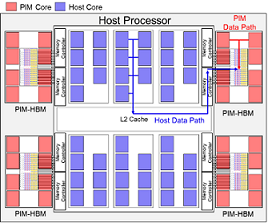

1. Signal Integrity/Power Integrity Design of Energy-efficient Processing-in-memory in High Bandwidth Memory (PIM-HBM) Architecture to Accelerate AI Applications

Fig. 1. Conceptual view of heterogeneous PIM-HBM architecture

As the demand for high computing performance in artificial intelligence increases, parallel processing accelerators have become the key factor in system performance. An important feature of these accelerators is the high DRAM bandwidth they require, which means that DRAM access occurs more frequently. In addition, the energy of one DRAM access is about 200 times that of one 32-bit floating-point operation, and this gap increases with transistor scaling. On the accelerator side, the number of cores is continuously increasing, which requires more off-chip memory bandwidth and area. As a result, the energy consumed by interconnection increases, and system performance is limited by insufficient off-chip memory bandwidth. To overcome this limitation, the Processing-In-Memory (PIM) architecture has re-emerged. A PIM architecture integrates processing units with memory and can be implemented with 3D-stacked high bandwidth memory (HBM).

Our lab’s AI hardware group focuses on the optimized design of the PIM-HBM architecture and its interconnection, considering signal integrity (SI) and power integrity (PI). To provide high memory bandwidth to the PIM cores through through-silicon vias (TSVs), either the TSV area or the TSV data rate must be increased. However, more than 30% of the DRAM area is already occupied by TSVs, and the TSV data rate is determined by SI. Optimal TSV design is therefore essential for achieving small area and high bandwidth. Also, when the number of PIM cores is increased for higher performance, more logic die area is required. This means that the memory bandwidth available to the host processor decreases because the interposer channel becomes longer. Consequently, the design of the PIM-HBM logic die and the interposer channel must be optimized for system performance without degrading interposer bandwidth. Through the system-level optimization described above, our PIM-HBM architecture can achieve high energy efficiency by drastically reducing interconnection lengths, and can improve system performance in memory-limited applications.

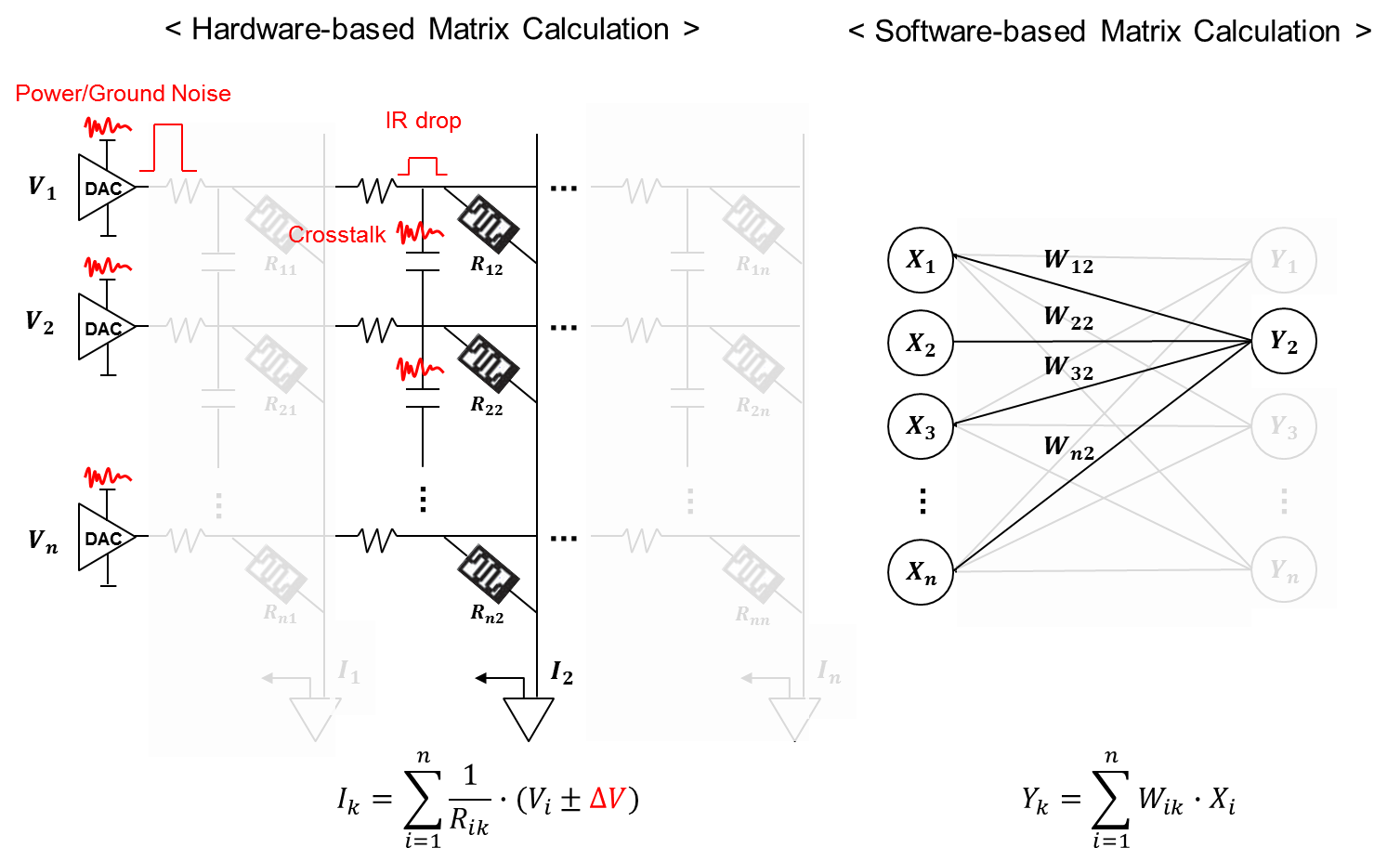

2. Signal Integrity/Power Integrity in a Memristor Crossbar Array for Neural Network Accelerator and Neuromorphic Chip

The most important part of artificial intelligence computation is massively parallel matrix-vector multiplication. This operation is inefficient in terms of calculation time and power in the conventional von Neumann computing architecture, because the data for the operation must be fetched from off-chip memory every clock cycle, which consumes a great deal of interconnection power. Various AI hardware architectures are emerging to solve this problem. Among them, a promising structure integrates computation into the memory using non-volatile resistive memory. This architecture reduces off-chip memory access for data fetches and computes matrix-vector multiplication directly as an analog operation: the current read from each memory cell is the product of the applied voltage and the cell conductance. AI calculations can thus be carried out very efficiently on hardware designed for this purpose.

Our lab’s AI hardware group focuses on the design of an optimized computing architecture and interconnection, considering signal integrity (SI) and power integrity (PI), for accurate hardware operation. Generally, a memristor crossbar array is smaller than the number of input neurons in a filter layer. However, a large-scale memristor crossbar array has a serious IR drop problem and can be more sensitive to noise such as crosstalk and ripple at high speed. The problem is more serious in multi-level input calculations because of the small voltage margin. We analyze SI/PI issues such as crosstalk noise between crossbar interconnections and power/ground noise, which can affect memristor resistance changes and calculation results. With a small read voltage margin, this noise can cause large errors in the calculation. Finally, we suggest a design guide for memristor crossbar arrays for hardware AI operation.
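The analog multiplication described above follows from Ohm's law and Kirchhoff's current law: with input voltage V_i on row i and conductance G_ij at the cell joining row i and column j, the current collected on column j is I_j = Σ_i V_i · G_ij. The sketch below models only this ideal case, ignoring wire resistance and noise; the function name and the numerical values are hypothetical.

```python
def crossbar_currents(voltages, conductances):
    """Ideal crossbar read: column current I_j = sum_i V_i * G[i][j].

    voltages: input voltage per row (volts).
    conductances: G[i][j] is the memristor conductance at row i, column j (siemens).
    Wire resistance (IR drop) and noise are ignored in this idealized model.
    """
    rows = len(conductances)
    if rows == 0 or len(voltages) != rows:
        raise ValueError("need one voltage per crossbar row")
    cols = len(conductances[0])
    if any(len(row) != cols for row in conductances):
        raise ValueError("conductance matrix must be rectangular")
    return [sum(voltages[i] * conductances[i][j] for i in range(rows))
            for j in range(cols)]

# Hypothetical 3x2 array: conductances in siemens, read voltages in volts.
G = [[1e-6, 2e-6],
     [3e-6, 4e-6],
     [5e-6, 6e-6]]
V = [0.1, 0.2, 0.3]
print(crossbar_currents(V, G))  # column currents in amperes, about 2.2e-6 and 2.8e-6
```

All column currents are produced in a single read step, which is why the array performs the whole matrix-vector product at once rather than one multiply-accumulate per clock cycle.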

Fig. 2. Signal Integrity/Power Integrity in a Memristor Crossbar Array for Hardware-based Matrix-Vector Multiplication

Professor Joung-Ho Kim writes a weekly column, “Kim Joung-Ho Column on Strategies in the AI Era,” in the Opinion Section of Chosun.com. In this column series, he discusses various aspects of AI technologies in the IT industry, drawing on his technical analysis and his vision for the future development of AI applications.

link : http://news.chosun.com/site/data/html_dir/2019/06/16/2019061602215.html

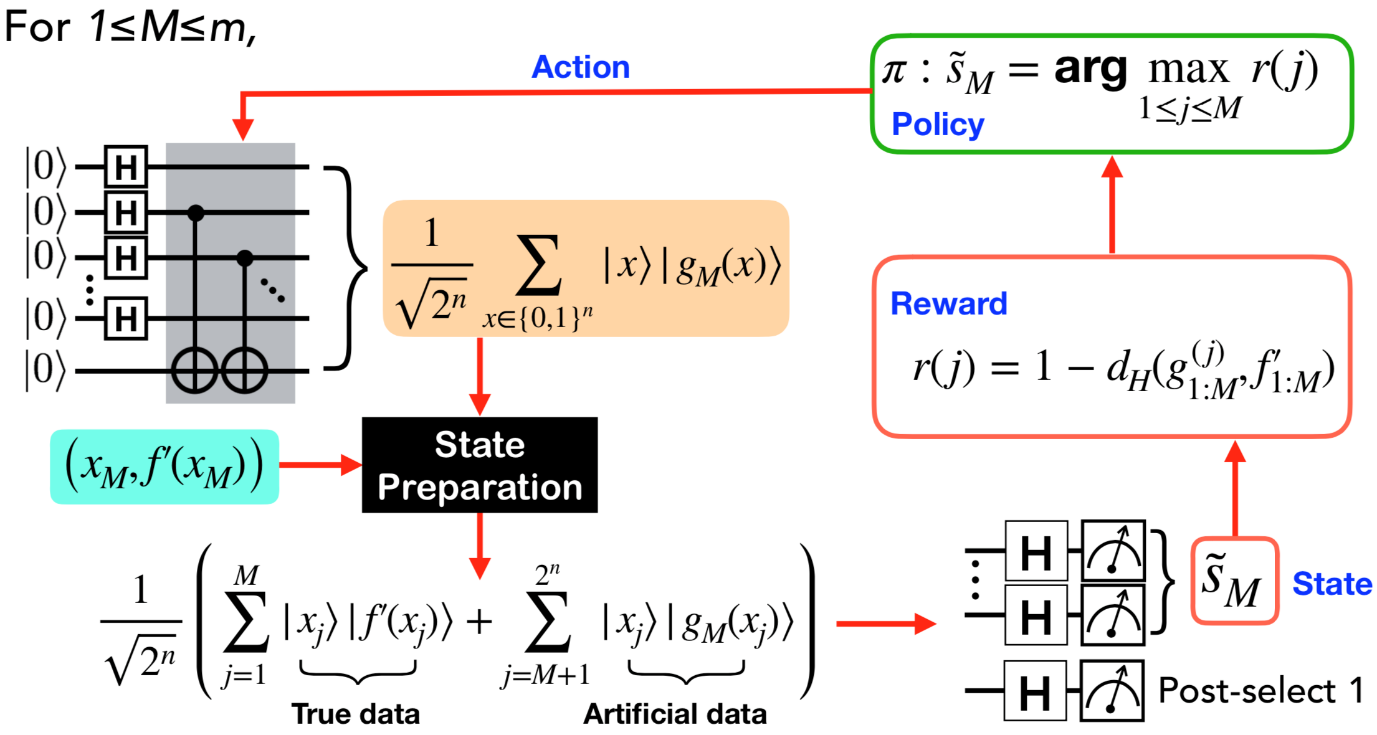

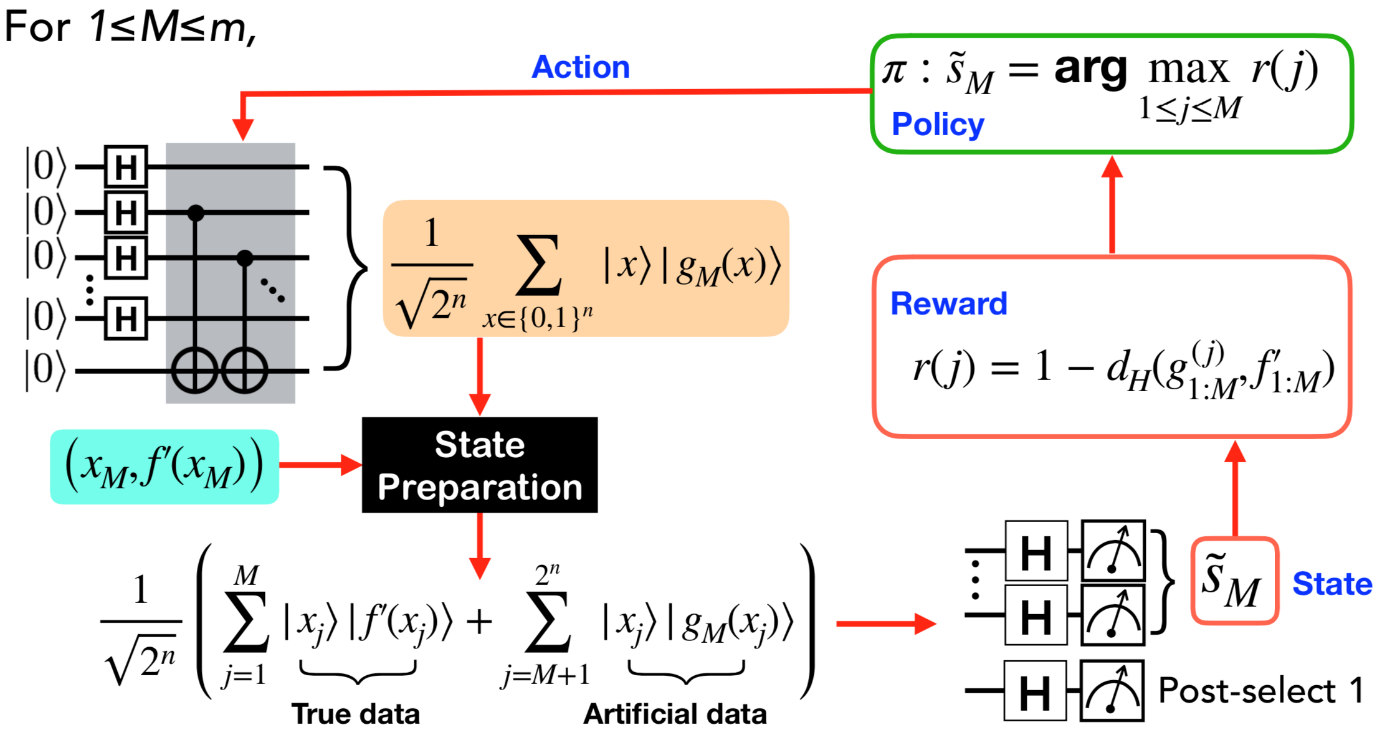

Title: Quantum-classical reinforcement learning for quantum algorithms with classical data

Authors: Daniel Kyungdeock Park, Jonghun Park, June-Koo Kevin Rhee

Many known quantum algorithms with quantum speed-ups require the existence of a quantum oracle that encodes multiple answers as a quantum superposition. These algorithms are useful for demonstrating the power of harnessing quantum mechanical properties for information processing tasks. Nonetheless, such quantum oracles usually do not exist naturally, and one is more likely to work with classical data. In this realistic scenario, whether the quantum advantage can be retained is an interesting and critical open problem.

In our research group, we tackle this problem using the learning parity with noise (LPN) problem as an example. LPN is an example of an intelligent behavior that aims to form a general concept from noisy data. This problem is thought to be classically intractable. The LPN problem is equivalent to decoding a random linear code in the presence of noise, and several cryptographic applications have been suggested based on the hardness of this problem and its generalizations. However, the ability to query a quantum oracle allows for an efficient solution. The quantum LPN algorithm also serves as an intriguing counterexample to the traditional belief that a quantum algorithm is more susceptible to noise than classical methods. However, as noted above, in practice a learner receives data from classical oracles. In our work, we showed that a naive application of the quantum LPN algorithm to classical data encoded as an equal superposition state requires exponential sample complexity, thereby nullifying the quantum advantage.

We developed a quantum-classical hybrid algorithm for solving the LPN problem with classical examples. The underlying idea of our algorithm is to learn the quantum oracle via reinforcement learning, in which the reward is determined by comparing the output of the guessed quantum oracle with the true data, and the action is chosen via a greedy algorithm. The reinforcement learning significantly reduces both the sample cost and the time cost of the quantum LPN algorithm in the absence of the quantum oracle. Simulations with a hidden bit string of length up to 12 show that the quantum-classical reinforcement learning performs better than known classical algorithms when the number of examples, run time, and robustness to noise are collectively considered.
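For readers unfamiliar with LPN, the classical examples a learner receives can be sketched as follows: each example is a random bit string a together with the parity bit ⟨a, s⟩ mod 2 of a hidden string s, where the parity bit is flipped with some probability. The function name, the secret, and the noise rate below are hypothetical choices for illustration, not values from our experiments.

```python
import random

def lpn_sample(secret, noise_rate, rng):
    """Draw one LPN example (a, b): b = <a, secret> mod 2, flipped with probability noise_rate."""
    a = [rng.randint(0, 1) for _ in secret]                  # uniformly random query bits
    parity = sum(ai & si for ai, si in zip(a, secret)) % 2   # noiseless label
    flip = 1 if rng.random() < noise_rate else 0             # Bernoulli noise bit
    return a, parity ^ flip

rng = random.Random(0)            # seeded only to make the run repeatable
secret = [1, 0, 1, 1]             # hidden bit string the learner must recover
examples = [lpn_sample(secret, 0.1, rng) for _ in range(5)]
for a, b in examples:
    print(a, b)
```

Without noise, the secret could be recovered from enough examples by Gaussian elimination over GF(2); the random flips are what make the problem hard for known classical methods.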