Highlights

What if an AI, when asked about a minister appointed last month, returned the name of a predecessor from a year ago? This example illustrates a critical limitation of current AI systems: their inability to reliably reflect up-to-date information. Our university’s research team has developed a new evaluation framework that automatically incorporates changes in real-world information and detects “temporal errors” that can appear plausible on the surface. The study is expected to enhance AI reliability by providing a systematic benchmarking framework.

A research team led by Professor Steven Euijong Whang from the School of Electrical Engineering, in joint research with Microsoft Research, has developed a system that automatically evaluates and diagnoses the temporal reasoning capabilities of Large Language Models (LLMs) using temporal database technology.

For AI to earn users’ trust, it must be able to accurately understand real-world information that changes over time. However, existing evaluation methods have largely focused on whether answers are simply right or wrong, or have examined only a narrow set of temporal relations, making them insufficient for evaluating the wide range of question scenarios that arise in real-world environments.

To overcome this challenge, the research team integrated “Temporal Database” design theory—an approach refined and validated over the past 40 years—into AI evaluation for the first time. By leveraging the temporal dependencies and relational structure of data, the technology can automatically generate 13 types of complex time-sensitive questions directly from the database, eliminating the need for researchers to manually create evaluation questions.

In particular, the technology replaces the conventional practice of manually writing evaluation questions with a data-driven method that generates them automatically. Because the entire process, from question generation to answer derivation and verification, is driven by the database, it also reduces the maintenance burden by eliminating the need to revise evaluation items by hand.

When real-world information changes, the evaluation questions, answers, and verification criteria are automatically updated simply by revising the relevant data in the database. Although the latest information must still be provided by external data sources or administrators, the framework is designed to automatically conduct the evaluation once the data has been updated.
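The source does not publish the framework's schema or query templates, so the following is only a minimal sketch of the general idea under assumptions of our own: a hypothetical valid-time table `office` (each row holds during `[valid_from, valid_to)`), a single hypothetical question template in `generate_item`, and Python's standard-library `sqlite3` module. The gold answer is derived by querying the data, so editing a row is enough to change it.

```python
import sqlite3

# Hypothetical valid-time table: each row is true during [valid_from, valid_to).
# A NULL valid_to means "still current". Schema and data are illustrative only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE office (role TEXT, holder TEXT, valid_from TEXT, valid_to TEXT)"
)
conn.executemany(
    "INSERT INTO office VALUES (?, ?, ?, ?)",
    [
        ("Minister of X", "Alice", "2023-01-01", "2025-06-01"),
        ("Minister of X", "Bob", "2025-06-01", None),
    ],
)

def generate_item(role: str, as_of: str) -> tuple[str, str]:
    """Fill a question template and derive its gold answer by querying the data."""
    question = f"Who held the office of {role} on {as_of}?"
    row = conn.execute(
        "SELECT holder FROM office WHERE role = ? AND valid_from <= ? "
        "AND (valid_to IS NULL OR ? < valid_to)",
        (role, as_of, as_of),
    ).fetchone()
    return question, row[0] if row else "unknown"

print(generate_item("Minister of X", "2025-07-15"))  # gold answer: Bob

# When the real world changes, only the data is edited: close Bob's term and
# add a successor. No question or answer is rewritten by hand.
conn.execute(
    "UPDATE office SET valid_to = ? WHERE holder = ? AND valid_to IS NULL",
    ("2026-02-01", "Bob"),
)
conn.execute(
    "INSERT INTO office VALUES (?, ?, ?, NULL)",
    ("Minister of X", "Carol", "2026-02-01"),
)
print(generate_item("Minister of X", "2026-03-01"))  # gold answer: Carol
```

ISO-8601 date strings are compared as text here, which orders them correctly; a production temporal database would use proper date types and richer temporal constraints.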

Additionally, going beyond traditional methods that assess only whether a final answer is correct or incorrect, the research team introduced a new metric that evaluates the factual validity of the dates or time periods used during the answering process. Using this metric, the team achieved a 21.7% improvement in detecting “Temporal Hallucinations”—cases in which an answer appears correct on the surface but is based on faulty temporal reasoning—compared with previous methods.
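The article does not define the new metric precisely, so the following is only a hypothetical sketch of the distinction it draws: final-answer correctness is scored separately from whether the dates or periods the model cited in its reasoning are factually valid. The `Interval` records, the `GROUND_TRUTH` table, and the exact-match rule in `score` are all assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Interval:
    entity: str
    start: int  # years, for simplicity
    end: int

# Hypothetical ground truth: who held a role, and when.
GROUND_TRUTH = {
    "Alice": Interval("Alice", 2019, 2022),
    "Bob": Interval("Bob", 2022, 2025),
}

def score(answer: str, gold: str, cited: list[Interval]) -> dict:
    """Score the final answer and, separately, every period cited in the reasoning."""
    answer_ok = answer == gold
    dates_ok = all(GROUND_TRUTH.get(c.entity) == c for c in cited)
    return {
        "answer_correct": answer_ok,
        "dates_valid": dates_ok,
        # Right answer backed by wrong dates: a "temporal hallucination".
        "temporal_hallucination": answer_ok and not dates_ok,
    }

# The model names the right person but justifies it with the wrong term of office.
print(score("Bob", "Bob", [Interval("Bob", 2020, 2025)]))
```

An answer-only metric would count this example as correct; checking the cited period is what exposes the faulty temporal reasoning.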

The database-based approach also improved evaluation efficiency. By eliminating the reliance on unnecessary data, the research team reduced the amount of input data required by an average of 51% compared with previous methods and demonstrated its effectiveness in reducing evaluation maintenance costs.

Professor Steven Euijong Whang stated, “This research shows that classical database design theory can play a crucial role in addressing the reliability challenges of today’s AI systems. By transforming large amounts of domain-specific data into evaluation resources, we expect this work to provide a practical foundation for verifying AI performance in various fields such as medicine and law.”

Soyeon Kim, a PhD student at KAIST, participated as the lead author of this study, and Jindong Wang (Microsoft Research, currently at William & Mary) and Xing Xie (Microsoft Research) participated as co-authors. The research results will be presented this April at ICLR 2026, the most prestigious academic conference in the field of artificial intelligence.

※ Paper Title: Harnessing Temporal Databases for Systematic Evaluation of Factual Time-Sensitive Question-Answering in Large Language Models

※ Paper Link: https://arxiv.org/abs/2508.02045

Meanwhile, this research was conducted with support from Microsoft Research, the National Research Foundation of Korea, and the Institute for Information & Communications Technology Planning & Evaluation (IITP) Global AI Frontier Lab projects (RS-2024-00469482, RS-2024-00509258).

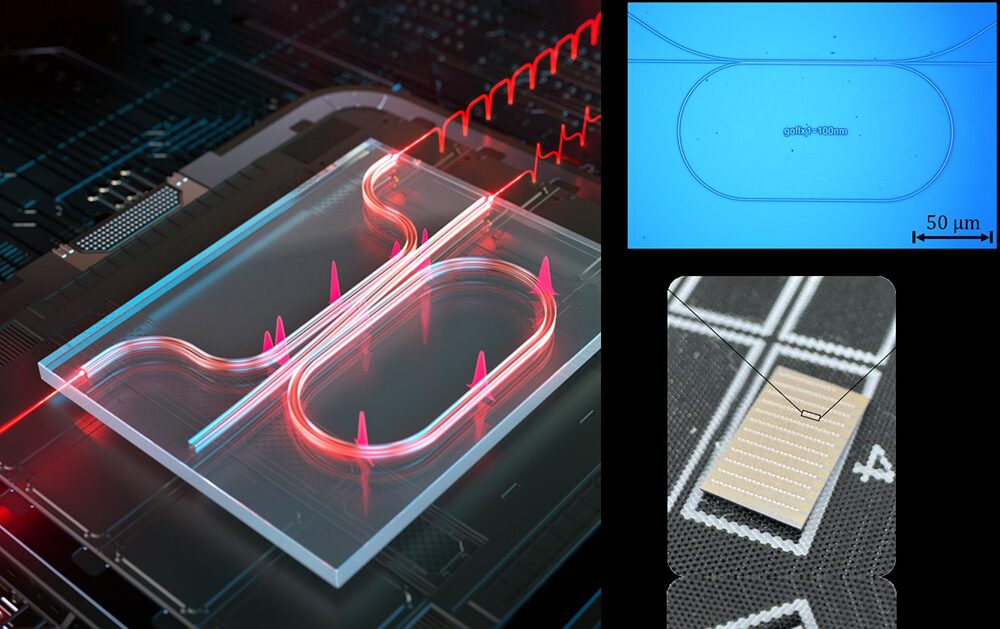

A new technology has been developed that allows light to be “designed” into desired forms, potentially making Artificial Intelligence (AI) and communication technologies faster and more accurate. A KAIST EE research team has developed an “integrated photonic resonator,” a core component of next-generation optical integrated circuits that process data using light. The research is particularly significant as it was led by an undergraduate student. This technology is expected to serve as a key foundation for next-generation technologies such as high-speed data processing and security-oriented quantum communication.

A research team led by Professor Sangsik Kim from the School of Electrical Engineering, in collaboration with Professor Jae Woong Yoon’s team from the Department of Physics at Hanyang University (President Kigeong Lee), has developed a new integrated photonic resonator structure capable of freely controlling optical signals by utilizing light interference (the phenomenon where two light waves meet and influence each other).

Photonic Integrated Circuits (PICs) process data at ultra-high speeds and with low power consumption using light. They are garnering significant attention as a fundamental platform technology for next-generation fields such as AI, data centers, and quantum information processing.

The core of this technology lies in the precision with which light can be controlled. Specifically, the ability to freely adjust the spectrum (color or wavelength distribution) and phase response (timing or wave position) of optical signals is essential for implementing high-performance optical communication and computing. However, conventional methods have faced fundamental limitations.

The integrated photonic resonator (optical resonator) at the center of the team’s work is a key optical device that traps light in a confined space to amplify it or to select specific colors (wavelengths), much as the body of a musical instrument amplifies sound. However, existing single-bus resonators, which are coupled to only one waveguide, have been limited in how precisely they can adjust the phase and spectrum of optical signals.

To overcome these challenges, the research team introduced a “dual-bus” structure. This design allows light that has passed through the resonator to recombine with light that has not, enabling precise control over interference. This allows for the free design of optical signals into desired forms, making it possible to control various types of light signals that were previously difficult to implement.
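To make the interference idea concrete, here is a minimal sketch using the textbook field transfer functions of a ring resonator coupled to two bus waveguides (an add-drop ring), with self-coupling coefficients `r1`, `r2` and single-pass amplitude `a`. The recombination step is our own hypothetical arrangement, not the published device: half the input passes the ring, half bypasses it with an adjustable phase `theta`, and the two fields are added at an ideal 50:50 combiner. All parameter values are illustrative.

```python
import cmath
import math

def add_drop_ring(phi: float, r1: float, r2: float, a: float) -> tuple[complex, complex]:
    """Textbook add-drop ring: output fields at the through and drop ports.

    phi: round-trip phase; r1, r2: self-coupling of the two bus couplers;
    a: single-pass amplitude transmission (a = 1 means lossless).
    """
    k1, k2 = math.sqrt(1 - r1**2), math.sqrt(1 - r2**2)
    denom = 1 - r1 * r2 * a * cmath.exp(1j * phi)
    through = (r1 - r2 * a * cmath.exp(1j * phi)) / denom
    drop = -k1 * k2 * math.sqrt(a) * cmath.exp(0.5j * phi) / denom
    return through, drop

def recombined(phi: float, theta: float, r: float = 0.9) -> float:
    """Hypothetical recombination: ring path (through port) plus a bypass path
    with extra phase theta, summed at an ideal 50:50 combiner. Returns power."""
    through, _ = add_drop_ring(phi, r, r, a=1.0)
    field = 0.5 * (through + cmath.exp(1j * theta))
    return abs(field) ** 2

# Changing only the bypass phase reshapes the spectrum around resonance (phi = 0).
for theta in (0.0, math.pi / 2, math.pi):
    spectrum = [recombined(p / 100, theta) for p in range(-50, 51, 25)]
    print(round(theta, 3), [round(x, 3) for x in spectrum])
```

In this toy model, changing only `theta` turns the lineshape around resonance from a dip into a peak or an asymmetric profile, which illustrates the kind of spectral freedom that interfering resonator and non-resonator light can provide.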

By applying this technology, the research team secured new characteristics for more precise control of wavelength properties and presented new possibilities for non-linear frequency conversion research (changing the color of light). Utilizing this technology enables faster and more accurate data processing, which is expected to provide the groundwork for performance enhancements in future high-speed data centers, AI accelerators, and quantum communication systems.

This research is especially meaningful as it was led by an undergraduate student. Taewon Kim, an undergraduate student who conducted the study through the KAIST Undergraduate Research Program (URP), stated, “I was able to develop the resonator principles I learned in the Introduction to Integrated Optics class into actual device designs and a published paper.”

Professor Sangsik Kim remarked, “This study goes beyond proposing a new device; it demonstrates that by precisely analyzing previously overlooked optical characteristics, physical limitations can be overcome. We expect this to contribute broadly to the development of optics-based AI accelerators and optical communication technologies.”

KAIST undergraduate student Taewon Kim participated as the lead author of this study, and the results were published on March 6th in the international optics journal, Laser & Photonics Reviews.

※ Paper Title: Dual-bus resonator for multi-port spectral engineering

※ DOI: 10.1002/lpor.202502935 (Authors: Taewon Kim, Mehedi Hasan, Yu Sung Choi, Jae Woong Yoon, and Sangsik Kim)

This research was supported by the KAIST URP Program, the Institute of Information & Communications Technology Planning & Evaluation (IITP), the U.S. Asian Office of Aerospace Research and Development (AOARD), and the National Research Foundation of Korea (NRF).

Instead of ointment and a bandage, a ‘smart patch that regulates its own treatment intensity simply by being attached’ has been developed. Our university’s research team has developed a ‘self-regulating OLED wound healing patch’ that combines light and drugs to roughly double the speed of wound recovery. It is expected to develop into an intelligent treatment technology in which light regulates drug release according to the patient’s condition.

A research team led by Professor Kyung Cheol Choi of the School of Electrical Engineering, together with Dr. Daekyung Sung of the Korea Institute of Ceramic Engineering and Technology (President Jong-seok Yoon) and Professor Chan-Su Park’s team at Chungbuk National University (Acting President Yu-sik Park), developed a ‘self-regulating wound healing patch’ technology that combines Organic Light Emitting Diodes (OLED) and a Drug Delivery System (DDS).

Ointments can cause side effects when overused, and Photobiomodulation (PBM)* treatment, which uses light to aid cell regeneration, loses effectiveness once the appropriate dose is exceeded.

*PBM (Photobiomodulation): A non-invasive treatment method that promotes the recovery of cells and tissues using low-intensity light.

The research team set out to overcome a shared limitation of existing treatments: the difficulty of regulating treatment intensity appropriately. The core of this research is that ‘light regulates the medicine.’ When light is applied, Reactive Oxygen Species (ROS) are generated in the body, and these molecules stimulate nanoparticles to release the drug.

In other words, the amount of ROS generated depends on the intensity of the light, so the amount of drug released is regulated accordingly. Light promotes cell regeneration, and the ROS it generates at the same time act as a ‘switch’ that releases only as much drug as is needed. The treatment therefore stays at its optimal level on its own, without separate adjustment by a person. Simply put, it is a ‘self-regulating treatment patch’ that releases an appropriate amount of medicine according to the intensity of the light shone on it.
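The source gives no kinetic model for this feedback. Purely to illustrate why release tracks light intensity, the toy model below assumes (our assumption, with made-up constants) that ROS production is proportional to irradiance and that ROS-triggered release from the nanoparticles follows first-order kinetics in the ROS level. The hypothetical function `released_fraction` returns the fraction of the loaded drug released after a given time.

```python
import math

def released_fraction(irradiance: float, hours: float,
                      ros_per_irradiance: float = 0.5,
                      release_rate: float = 0.2) -> float:
    """Toy model, not from the paper: ROS level proportional to irradiance,
    and drug release first-order in the ROS level. Constants are made up."""
    ros_level = ros_per_irradiance * irradiance  # no light -> no ROS
    return 1.0 - math.exp(-release_rate * ros_level * hours)

for irr in (0.0, 1.0, 2.0, 4.0):  # arbitrary irradiance units
    print(irr, round(released_fraction(irr, hours=6), 3))
```

With no light nothing is released, and brighter light releases more drug, saturating toward the full load; a real formulation would require measured release kinetics.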

The research team produced a 630-nanometer (nm) wavelength OLED patch that closely adheres to the skin. This patch was designed to deliver light evenly to induce cell regeneration while releasing an appropriate amount of antioxidant drugs, such as Centella asiatica (commonly known as tiger grass) extract, a plant-derived ingredient well known for its skin regeneration effects.

In addition, the patch was made in a wearable form that conforms closely to the curves of the skin to reduce light energy loss, and it stays at about 31 degrees Celsius even during long-term use, so it can be used safely without the risk of low-temperature burns. The patch also maintained its performance for more than 400 hours, indicating its potential for use in actual medical devices.

The effect was confirmed through experiments. In skin cell experiments, ‘combined treatment’ using light and drugs together showed faster recovery than single treatment. In mouse experiments, the wound recovery rate was 67% as of the 14th day of treatment, recording a healing speed about twice as fast as that of the control group (35%). The quality of healing was also significantly improved, such as skin thickness and barrier protein formation recovering to normal levels.

Professor Kyung Cheol Choi stated, “This research expands OLED-based light therapy beyond simply applying light, so that the light also regulates the treatment, creating a combined treatment platform in which drug release is automatically regulated according to the wound’s status. We plan to develop it into an intelligent treatment technology that can be applied to various wounds and diseases and that responds on its own to the patient’s physical condition.”

Hyejeong Yeon, a doctoral student at the KAIST School of Electrical Engineering, participated as the first author. The paper was published online in the international academic journal ‘Materials Horizons’ in January and was selected as the Front Cover Paper in March.

※ Paper title: A self-regulating wearable OLED patch for accelerated wound healing via photobiomodulation-triggered drug delivery

※ DOI: https://doi.org/10.1039/D5MH02129D (Authors: Hyejeong Yeon, Sohyeon Yu, Minhyeok Lee, Sangwoo Kim, Yongjin Park, Hye-Ryung Choi, Won Il Choi, Chang-Hun Huh, Yongmin Jeon, Chan-Su Park, Daekyung Sung, and Kyung Cheol Choi)

This research was conducted with the support of the Future Discovery Convergence Science and Technology Development Program (2021M3C1C3097646) carried out through the National Research Foundation of Korea (NRF) of the Ministry of Science and ICT.

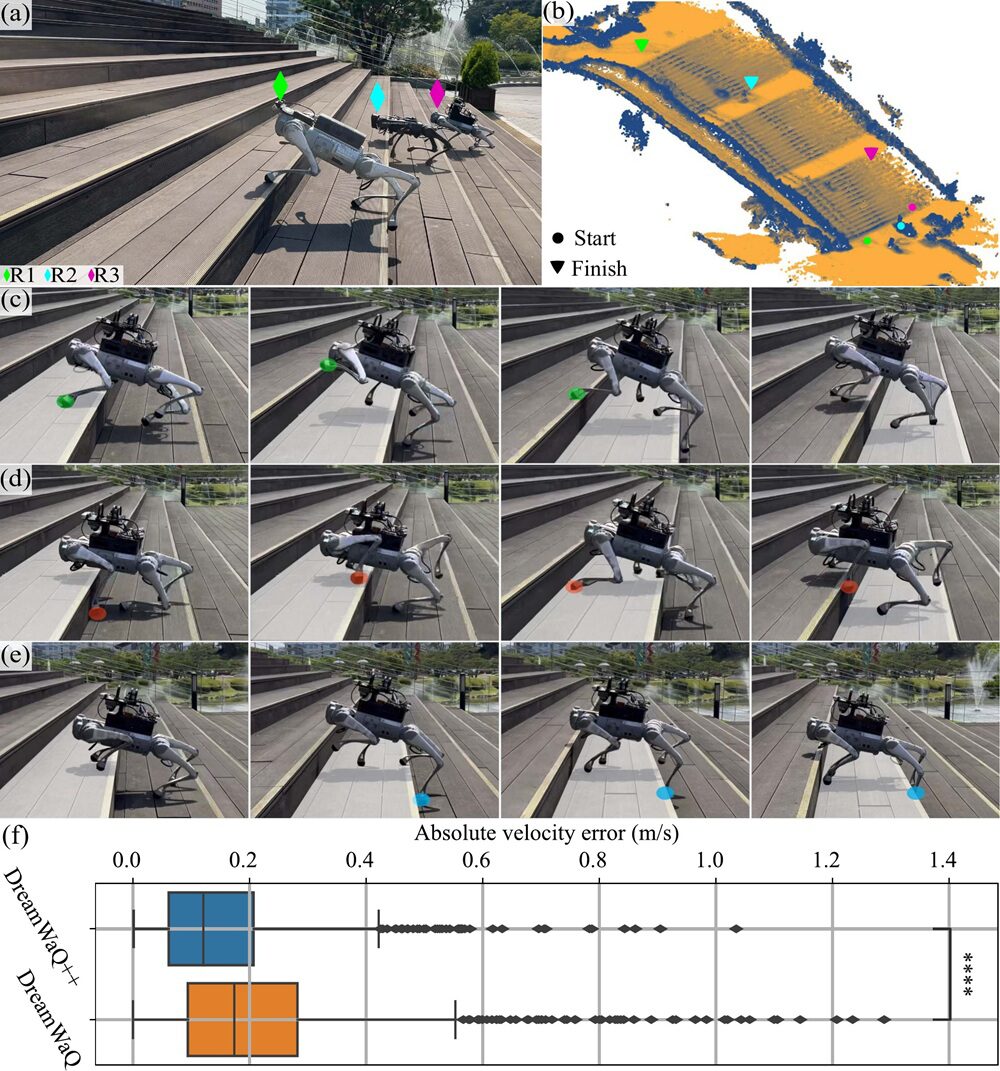

An EE research team has developed quadrupedal robot technology that goes beyond walking by estimating terrain without visual information: the robot also perceives its surroundings through cameras and LiDAR sensors and makes its own decisions while walking, much like animals that visually examine terrain and adjust their steps. The technology is also expected to extend to other robotic platforms such as wheeled-legged robots and humanoid robots.

A research team led by Professor Hyun Myung from the School of Electrical Engineering, in collaboration with the lab’s startup EuRoboTics Co., Ltd., has developed “DreamWaQ++,” a quadrupedal robot control technology that recognizes terrain based on visual information and adjusts locomotion strategies in real time.

The previously developed “DreamWaQ” by this research team is a “blind locomotion” technology that estimates terrain using only proprioceptive sensing such as joint encoders and inertial sensors, enabling robust movement even without visual information. It allows stable walking even in environments where visual information is difficult to obtain, such as disaster situations, but has the limitation that the robot can only adjust its movement after its legs directly contact obstacles.

The newly developed DreamWaQ++ overcomes this limitation by combining proprioceptive sensing with exteroceptive sensing based on cameras and LiDAR. The key is that it enables “perception-based locomotion,” in which the robot recognizes obstacles in advance and proactively adjusts its walking strategy, going beyond simple reactive control to understanding and making decisions about the environment.

To achieve this, the research team designed a multimodal reinforcement learning architecture that runs on lightweight computation, enabling real-time control. The design also provides resilience, automatically switching to locomotion based on the remaining sensory modalities when a sensor error occurs, and scalability, allowing it to be applied to various robotic platforms.
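The source describes this fallback as part of a learned multimodal reinforcement-learning design and does not give explicit switching rules. The rule-based selector below is only a hypothetical illustration of the behavior (use every healthy exteroceptive sensor, and fall back to blind, proprioception-only locomotion when none is available); the names `Mode` and `select_mode` are our own.

```python
from enum import Enum

class Mode(Enum):
    MULTIMODAL = "proprioception + camera + LiDAR"
    CAMERA = "proprioception + camera"
    LIDAR = "proprioception + LiDAR"
    BLIND = "proprioception only"

def select_mode(camera_ok: bool, lidar_ok: bool) -> Mode:
    """Hypothetical fallback rule: keep every healthy exteroceptive sensor,
    and drop to blind locomotion when no exteroceptive input is usable."""
    if camera_ok and lidar_ok:
        return Mode.MULTIMODAL
    if camera_ok:
        return Mode.CAMERA
    if lidar_ok:
        return Mode.LIDAR
    return Mode.BLIND

# Example: the LiDAR drops out mid-run, then the camera fails too.
for camera_ok, lidar_ok in [(True, True), (True, False), (False, False)]:
    print(camera_ok, lidar_ok, select_mode(camera_ok, lidar_ok).value)
```

A learned policy can blend modalities continuously rather than switching between discrete modes; the explicit rules here only make the idea of graceful degradation easy to see.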

Performance was also demonstrated through experiments. The robot equipped with DreamWaQ++ showed performance surpassing existing technologies in various challenging environments.

In stair locomotion experiments, it completed a course of 50 steps (30.03 m horizontally, 7.38 m vertically) in just 35 seconds, outperforming both blind locomotion controllers and commercial perception-based controllers.
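The reported course figures can be turned into average rates with a few lines of arithmetic. Only the four input numbers come from the article; the derived quantities are averages over the whole course and say nothing about the geometry of individual steps.

```python
import math

# Course figures reported in the article.
horizontal_m, vertical_m, steps, seconds = 30.03, 7.38, 50, 35.0

rise_per_step_cm = vertical_m / steps * 100
horizontal_speed = horizontal_m / seconds  # m/s
climb_rate = vertical_m / seconds          # m/s
incline_deg = math.degrees(math.atan2(vertical_m, horizontal_m))

print(f"mean rise per step:    {rise_per_step_cm:.1f} cm")
print(f"mean horizontal speed: {horizontal_speed:.2f} m/s")
print(f"mean climb rate:       {climb_rate:.2f} m/s")
print(f"mean incline:          {incline_deg:.1f} deg")
```

This works out to roughly 14.8 cm of rise per step, an average horizontal speed of about 0.86 m/s, a climb rate of about 0.21 m/s, and a mean incline of about 13.8°.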

On steep slopes, it stably climbed a 35° incline, 3.5 times the 10° slope used in training, and actively adjusted its posture to reduce rear-leg motor torque by a factor of approximately 1.5 compared with existing methods.

In addition, in various obstacle scenarios, it demonstrated learning-based perception by autonomously selecting more efficient paths without separate path planning, and on terrain with uncertain drops, it exhibited “exploration behavior,” voluntarily stopping to inspect the ground before moving on.

Along with this, it demonstrated high agility by overcoming obstacles of 41 cm, exceeding the robot’s height, even while carrying a payload of 2.5 kg. In simulation, it was shown that it can handle obstacles up to 1.0 m with ANYmal-C (a representative quadrupedal robot developed at ETH Zurich) and up to 1.5 m with KAIST HOUND (a quadrupedal robot developed by Professor Hae-Won Park’s group at KAIST).

In particular, although it was trained only on relatively low obstacles (27 cm), it achieved a success rate of about 80% on real stairs 42 cm high. This indicates that the robot is not simply repeating learned situations but can adapt to new environments on its own.

The research team expects that this technology can be applied in environments that conventional wheeled robots have difficulty accessing, such as disaster response, industrial facility inspection, forestry, and agriculture.

Professor Hyun Myung said, “This research shows that robots have advanced beyond simply moving to a level where they understand the environment and make decisions on their own,” adding, “We will further expand this into intelligent mobility technologies applicable in various real-world environments.”

This study was led by I Made Aswin Nahrendra (first author, currently a researcher at Krafton and a KAIST PhD graduate), with co-authors Byeongho Yu (EuRoboTics Co., Ltd. CEO), Minho Oh (EuRoboTics Co., Ltd. CTO), Dongkyu Lee (EuRoboTics Co., Ltd. CTO), Seunghyun Lee (KAIST), Hyeonwoo Lee (KAIST), and Dr. Hyungtae Lim (MIT postdoctoral researcher). The study was published in February in the world-renowned robotics journal IEEE Transactions on Robotics (T-RO).

※ Paper title: DreamWaQ++: Obstacle-Aware Quadrupedal Locomotion With Resilient Multi-Modal Reinforcement Learning

※ link to original Paper: https://arxiv.org/abs/2409.19709

※ Videos demonstrating DreamWaQ++ operation and locomotion

- Main video

- Additional video

- Humanoid application video of improved DreamWaQ (1)

- Humanoid application video of improved DreamWaQ (2)

- Wheeled-legged robot application video of improved DreamWaQ

- Project page

This research was supported by the Korea Evaluation Institute of Industrial Technology (KEIT) under the Ministry of Trade, Industry and Energy (Project No. 20018216, “Development and Field Deployment of Mobility Intelligence Software for Autonomous Locomotion of Walking Robots in Dynamic and Unstructured Environments”), and by the Korea Forest Service (Korea Forestry Promotion Institute) through the Forest Science and Technology R&D Program (Project No. RS-2025-25424472).

Professor Choi is an internationally recognized scholar in wireless networking and mobile systems/computing. An IEEE Fellow recognized for his significant contributions to the field, he has held distinguished leadership roles in both academia and industry, including serving as a professor at Seoul National University and, most recently, as an Executive Vice President at Samsung Electronics.

His research has advanced intelligent network optimization, digital twins, low-latency mobile systems, and indoor localization. At KAIST, his work will focus on AI-native network architectures, autonomous network operations using LLMs and multi-agent systems, and next-generation infrastructure for physical AI.

Professor Choi’s office is located in N1 Room 616. For more details on his research, please refer to the website below.

► Visit Professor Sunghyun Choi’s Homepage


Jo Yehhyun, a doctoral student in the School of Electrical Engineering, KAIST (advisor: Professor HyunJoo J. Lee), received the Excellence Award at the 4th Wonik Next-Generation Engineering Award hosted by the National Academy of Engineering of Korea (NAEK).

Jo Yehhyun was selected for the Excellence Award in recognition of developing a system that enables precise modulation and observation of brain function by integrating ultrasound neuromodulation technology, MEMS, and biosignal measurement technologies. As a leading researcher in ultrasound brain stimulation in Korea, she has contributed to the advancement of next-generation neuroengineering by publishing six SCI(E)-indexed first-author papers.

Jo Yehhyun stated, “I will continue to develop and validate technologies grounded in fundamental principles so that engineering innovations can reach real clinical and industrial applications, and strive to become a responsible researcher who contributes to society.”

The award ceremony was held on the afternoon of March 10 at the Grand Walkerhill Seoul Hotel in Gwangjin-gu, Seoul.

<Hyo Eun Jeong, Integrated M.S./Ph.D. Student>

A new display technology has been developed that increases resolution while consuming minimal power. A research team led by Professor Young Min Song from the School of Electrical Engineering at KAIST, in collaboration with Professor Hyunho Jung’s team at Gwangju Institute of Science and Technology (GIST), has implemented a “monopixel” structure in which a single pixel can independently change its color to express a variety of colors while consuming almost no power to maintain them.

The “reconfigurable low-power reflective monopixel (reconfigurable Gires–Tournois resonator, hereafter r-GT)” developed by Professor Song’s team is a technology that uses electrochromic materials—substances that change color when electricity is applied—to generate color with low power consumption. Through this, the study opens a pathway toward creating clearer AR·VR displays without increasing battery burden.

Displays are continuously reducing pixel size to achieve sharper images. However, as pixels become smaller, power consumption increases and light intensity decreases. In particular, displays used in devices such as AR·VR, which are viewed at close range, must simultaneously satisfy the requirements of extremely small pixel size and low power consumption, making them difficult to realize.

The r-GT pixel developed by the research team changes color when voltage is applied, and once changed, the color is maintained for a certain period even after the voltage is removed. In other words, power is required only when changing the color, while almost no power is needed to maintain it.

The core of this technology lies in two key elements. First is the conductive polymer “polyaniline (PANI),” whose properties change when electricity is applied. This material responds even at low voltages below 1 volt (V), and its refractive index changes, resulting in a change in color. The refractive index indicates how much light bends when passing through a material, and when this value changes, the perceived color also changes.

Second, the researchers combined the material with a resonator structure that reflects light back and forth multiple times to enhance specific colors. This structure amplifies even small changes in refractive index, enabling vivid color expression with low power consumption.
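As a rough illustration of how a refractive-index change recolors such a resonator, the sketch below uses the standard normal-incidence thin-film formula for a single absorbing layer on an ideal mirror, a simple Gires–Tournois-type cavity. It is not the published r-GT stack: the complex film indices, the 86 nm thickness, and the ideal back mirror are hypothetical values chosen so that a reflectance dip falls in the visible range.

```python
import cmath
import math

def reflectance(wavelength_nm: float, n: complex, thickness_nm: float,
                n_ambient: float = 1.0) -> float:
    """Normal-incidence reflectance of one (possibly absorbing) film on an
    ideal mirror, using the standard single-layer thin-film formula."""
    r_top = (n_ambient - n) / (n_ambient + n)                # ambient/film interface
    r_mirror = -1.0                                          # ideal metal back mirror
    beta = 2 * math.pi * n * thickness_nm / wavelength_nm    # complex one-way phase
    e = cmath.exp(2j * beta)                                 # round trip, incl. absorption
    r = (r_top + r_mirror * e) / (1 + r_top * r_mirror * e)
    return abs(r) ** 2

def dip_wavelength(n: complex, thickness_nm: float = 86.0) -> int:
    """Visible wavelength (nm) of lowest reflectance, i.e. the most absorbed color."""
    return min(range(400, 701), key=lambda wl: reflectance(wl, n, thickness_nm))

# Hypothetical indices: only the real part changes, as a voltage-tuned film might.
for n in (1.6 + 0.2j, 1.7 + 0.2j, 1.8 + 0.2j):
    print(n, dip_wavelength(n))
```

Raising the real part of the index moves the reflectance dip, and with it the absorbed color, to longer wavelengths, which changes the reflected color a viewer sees. With a lossless film (real index) this ideal-mirror cavity reflects all light, so in this simplified model the absorption term is what makes the color visible.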

As a result, a wide color variation exceeding 220° was achieved with ultra-low power consumption of 90 μW cm⁻². In simple terms, with only about 0.00009 W per 1 cm², it is possible to represent more than half of the full color wheel (360°).

Another important feature is the “monopixel” structure. Conventional displays divide a single pixel into red (R), green (G), and blue (B) subpixels to create colors. In contrast, a monopixel allows an entire pixel to independently change color and express a variety of colors. Because it does not require subpixel division, more pixels can be implemented within the same area, leading to higher resolution and reduced light loss, resulting in clearer images.

In addition, PANI has the property of maintaining its color state for a certain period even after the applied voltage is removed. This confirms the feasibility of implementing a “memory-in-pixel” display, in which power is used only when changing colors and almost no power is required to maintain them.
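A simple energy budget shows why holding a color without power matters for mostly static content. The function `energy_j` and all numbers below are hypothetical. Because the article says PANI keeps its color only for a certain period, the sketch also charges one switching event each time an assumed retention time elapses.

```python
import math

def energy_j(duration_s: float, color_changes: int, switch_energy_j: float,
             hold_power_w: float = 0.0, retention_s: float = math.inf) -> float:
    """Energy over a viewing period: switching energy for every color change
    and every refresh (needed once the held state decays), plus any power
    drawn continuously to hold the image."""
    refreshes = math.floor(duration_s / retention_s)  # 0 when retention is infinite
    return (color_changes + refreshes) * switch_energy_j + hold_power_w * duration_s

# Hypothetical values: one hour of a mostly static image with 10 updates.
hour = 3600.0
driven = energy_j(hour, 10, switch_energy_j=0.0, hold_power_w=0.01)
memory = energy_j(hour, 10, switch_energy_j=0.001, retention_s=600.0)
print(f"continuously driven: {driven:.3f} J, memory-in-pixel: {memory:.3f} J")
```

With these made-up values, the continuously driven display spends 36 J over the hour, while the memory-in-pixel display spends about 0.016 J (10 updates plus 6 refreshes). Real savings depend on the measured switching energy and retention time.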

The research team confirmed that the technology can achieve a wide color tuning range of 220.6°, and that pixel size can be reduced to approximately 1.5 micrometers (μm). This corresponds to an ultra-high resolution of up to about 16,900 PPI, a level at which individual pixels are difficult to distinguish with the human eye.
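The resolution figure follows from the pixel size by simple arithmetic, assuming square pixels placed edge to edge so that the pitch equals the 1.5 μm size. For comparison, the sketch also includes a hypothetical RGB-stripe layout in which three 1.5 μm subpixels sit side by side, which is one way to see why dropping subpixel division raises pixel density.

```python
MICROMETERS_PER_INCH = 25_400  # 1 inch = 25.4 mm exactly

def pixels_per_inch(pixel_pitch_um: float) -> float:
    """Linear pixel density when pixels sit edge to edge at the given pitch."""
    return MICROMETERS_PER_INCH / pixel_pitch_um

monopixel_ppi = pixels_per_inch(1.5)       # one full-color pixel every 1.5 um
rgb_stripe_ppi = pixels_per_inch(3 * 1.5)  # hypothetical: R, G, B subpixels of 1.5 um each
print(round(monopixel_ppi), round(rgb_stripe_ppi))
```

This gives about 16,933 PPI for the monopixel case, consistent with the article's figure of about 16,900 PPI, versus about 5,644 PPI along the stripe direction for the hypothetical RGB layout.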

Furthermore, even with a single-pixel structure, it was possible to reproduce about 48.1% of the standard color gamut (sRGB), and by diversifying material combinations, richer color expression of up to approximately 69.9% was demonstrated.

The team fabricated a 5×5 monopixel array to verify performance. The energy required to change color was extremely low (2.31 mJ), confirming that colors can be expressed with up to 5.8 times less power compared to conventional LEDs. In addition, as a reflective display that utilizes external light, it was also confirmed that visibility improves under brighter ambient lighting conditions.

This study demonstrates that full-color implementation is possible with ultra-low power by combining electrochemical materials with optical resonator structures. The technology is expected to be applied to various fields where energy efficiency is important, including ultra-high-resolution near-eye displays for AR·VR, wearable devices, outdoor information displays, and electronic paper. It also suggests the potential for development into sustainable and energy-efficient display technologies by minimizing power consumption while maintaining color.

Professor Young Min Song stated, “This technology was developed to enable a wide range of colors using only a very small amount of electricity. When combined with display driving methods, it can be applied not only to ultra-high-resolution displays with lower power consumption but also to various optical technologies.”

This research was co-first authored by Hyo Eun Jeong, an integrated M.S./Ph.D. student at KAIST, with Professor Young Min Song serving as the corresponding author. The results were published online on February 28 in Light: Science & Applications (Impact Factor: 23.4).

※ Paper Title: Sub-1-volt, reconfigurable Gires-Tournois resonators for full-coloured monopixel array

※ DOI: https://www.nature.com/articles/s41377-026-02228-2

This work was supported by the Ministry of Science and ICT and the National Research Foundation of Korea (NRF), the InnoCORE-GIST program, the Nano and Materials Technology Development Program, the Future Medical Innovation Technology Development Program, the Global Research Collaboration Hub Program, and the Bio-Industry Technology Development Program funded by the Ministry of Trade, Industry and Energy (MOTIE) and KEIT.

Hyungki Ahn, an alumnus of our School (B.S. Class of ’94, M.S. ’98, Ph.D. ’00, Advisor Bum-seop Kim), has been promoted to Executive Vice President in the Hyundai Motor Company executive reshuffle announced on March 27, 2026.

Executive Vice President Ahn is a homegrown KAIST engineer who completed his bachelor’s, master’s, and doctoral degrees at the School of Electrical Engineering, KAIST. He is a key leader driving the transition toward vehicle electronic control and Software Defined Vehicles (SDVs) within Hyundai Motor Group.

Major Achievements and Expertise

- Leading Future Mobility Innovation: As Head of the Electronics Development Center in the Advanced Vehicle Platform (AVP) Division at Hyundai Motor Company, he has overseen the development of next-generation electronic architectures and integrated controllers that serve as the “brain” of vehicles.

- Global Recognition of Technological Excellence: He led the development of the Central Communication Unit (CCU), a core in-vehicle communication system, earning the 2022 PACE Award—often referred to as the “Oscars of the automotive industry”—thereby showcasing Korea’s software technology on the global stage.

- Realizing the SDV Vision: He has spearheaded the advancement of connectivity technologies such as over-the-air (OTA) software updates and Digital Key 2, innovating user experience and bringing Hyundai’s future mobility strategy into reality.

This promotion is regarded as part of Hyundai Motor Company’s strategic move to strengthen its capabilities in autonomous driving and future mobility technologies by appointing experts in these fields to key leadership positions.

We sincerely congratulate Executive Vice President Hyungki Ahn, who continues to bring honor to his alma mater while contributing to transforming the paradigm of the global automotive industry. Related Article: https://n.news.naver.com/article/009/0005656970

※ If you would like to share alumni news, please submit it via email to Mr. Bansuek Lee, in charge of public relations for the EE (ws0709@kaist.ac.kr).